This documentation provides a comprehensive guide to using Solo to launch a Hiero Consensus Node network, including setup instructions, usage guides, and information for developers. It covers everything from installation to advanced features and troubleshooting.


Documentation

- 1: Simple Solo Setup

- 1.1: System Readiness

- 1.2: Quickstart

- 1.3: Managing Your Network

- 1.4: Cleanup

- 2: Advanced Solo Setup

- 2.1: Using Environment Variables

- 2.2: Network Deployments

- 2.2.1: One-shot Falcon Deployment

- 2.2.2: Falcon Values File Reference

- 2.2.3: Step-by-Step Manual Deployment

- 2.2.4: Dynamically add, update, and remove Consensus Nodes

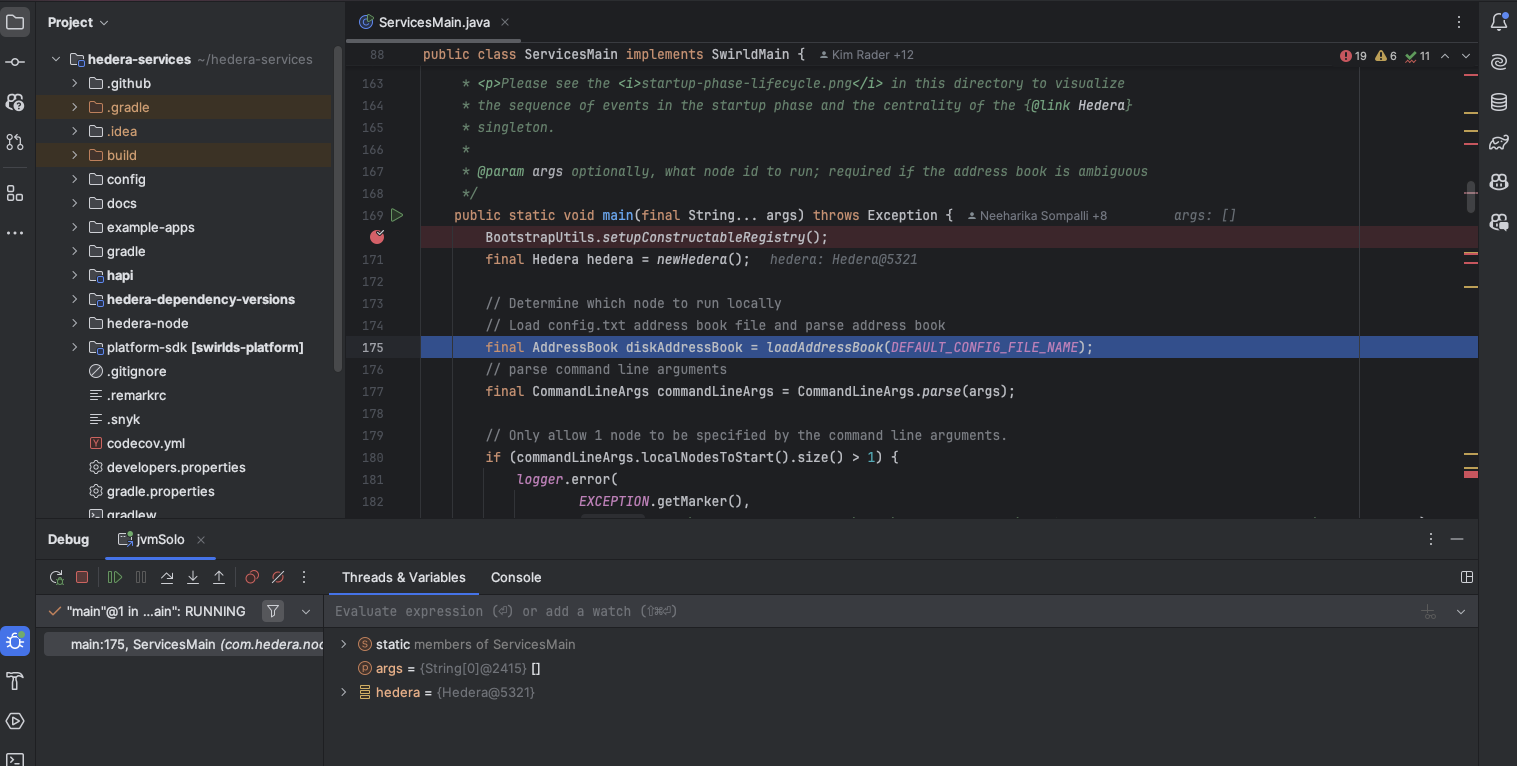

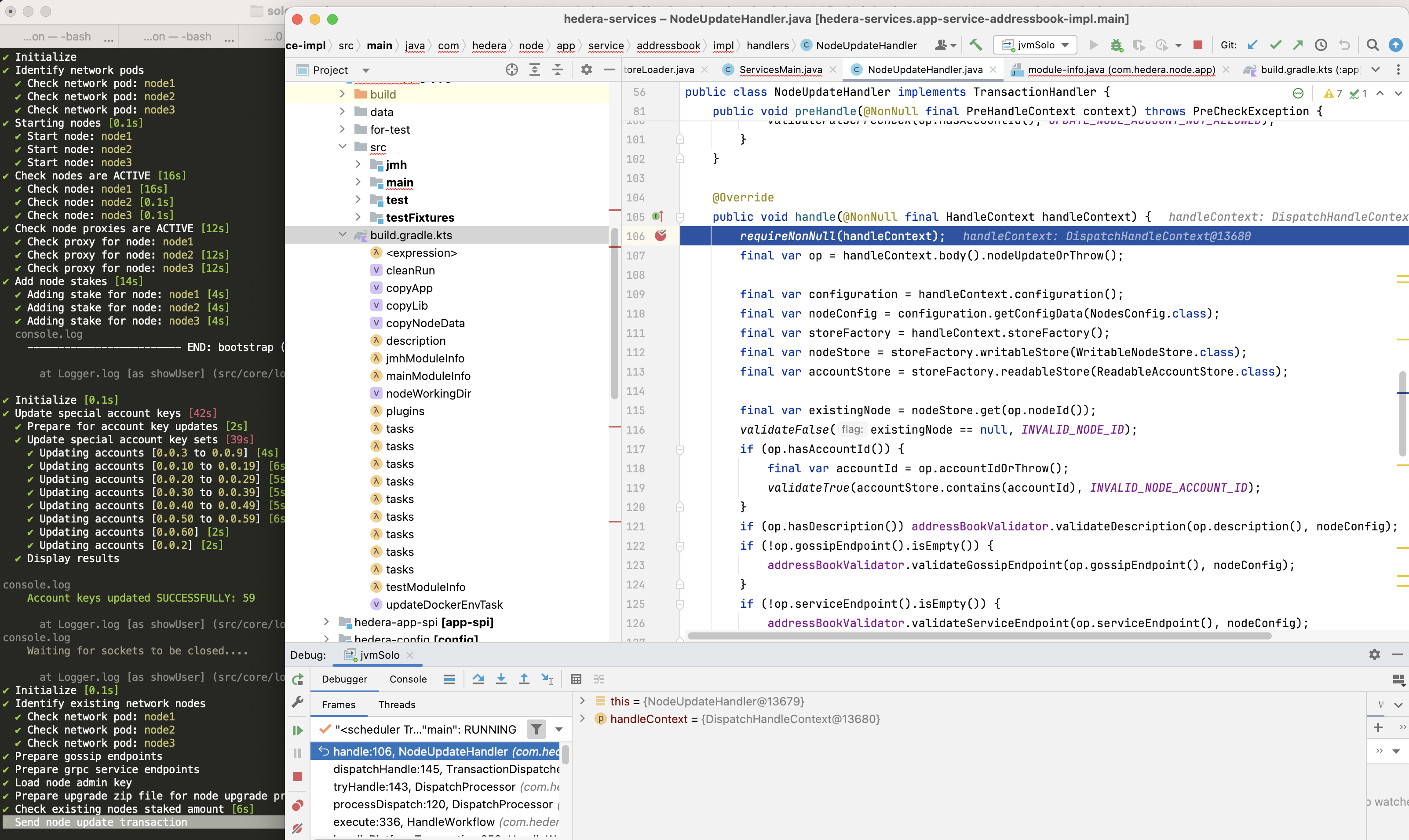

- 2.3: Attach JVM Debugger and Retrieve Logs

- 2.4: Customizing Solo with Tasks

- 2.5: Solo CI Workflow

- 2.6: CLI Reference

- 2.6.1: Solo CLI Reference

- 2.6.2: CLI Migration Reference

- 3: Using Solo

- 3.1: Accessing Solo Services

- 3.1.1: Using Solo with Mirror Node

- 3.2: Using Solo with Hiero JavaScript SDK

- 3.3: Using Solo with EVM Tools

- 3.4: Using Network Load Generator with Solo

- 4: Troubleshooting

- 5: Community Contributions

- 6: FAQs

1 - Simple Solo Setup

1.1 - System Readiness

Overview

Before you deploy a local Hiero test network with solo one-shot single deploy, your machine must meet specific hardware, operating system, and tooling requirements. This page walks you through the minimum and recommended memory, CPU, and storage, supported platforms (macOS, Linux, and Windows via WSL2), and the required versions of Docker/Podman, Node.js, and Kubernetes tooling. By the end of this page, you will have your container runtime installed, platform-specific settings configured, and all Solo prerequisites in place so you can move on to the Quickstart and create a local network with a single command.

Hardware Requirements

Solo’s resource requirements depend on your deployment size:

| Configuration | Minimum RAM | Recommended RAM | Minimum CPU | Minimum Storage |

|---|---|---|---|---|

| Single-node | 12 GB | 16 GB | 6 cores (8 recommended) | 20 GB free |

| Multi-node (3+ nodes) | 16 GB | 24 GB | 8 cores | 20 GB free |

Note: If you are using Docker Desktop, ensure the resource limits under Settings → Resources are set to at least these values. Docker Desktop enforces its own limits, regardless of how much memory your machine has.
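As a rough pre-check, the host's CPU and memory can be compared against the minimums in the table above. The sketch below is not part of Solo; the function names `meets_minimum`, `detect_cores`, and `detect_mem_gb` are hypothetical helpers, and the reported memory is rounded down, so a machine sold as "12 GB" may report 11.

```shell
# Sketch: compare detected CPU cores and RAM with the single-node minimums
# from the table above (6 cores, 12 GB). All helper names are hypothetical.

# meets_minimum CORES MEM_GB MIN_CORES MIN_MEM_GB -> exit status 0 if both are met
meets_minimum() {
  [ "$1" -ge "$3" ] && [ "$2" -ge "$4" ]
}

# Number of logical CPU cores (Linux/WSL2 via nproc, macOS via sysctl).
detect_cores() {
  if command -v nproc >/dev/null 2>&1; then nproc; else sysctl -n hw.ncpu; fi
}

# Total memory in whole GiB, rounded down.
detect_mem_gb() {
  if [ -r /proc/meminfo ]; then
    awk '/^MemTotal:/ { print int($2 / 1024 / 1024) }' /proc/meminfo
  else
    echo $(( $(sysctl -n hw.memsize) / 1024 / 1024 / 1024 ))
  fi
}

if meets_minimum "$(detect_cores)" "$(detect_mem_gb)" 6 12; then
  echo "OK: host meets the single-node minimum"
else
  echo "WARNING: host is below the single-node minimum (6 cores, 12 GB)"
fi
```

For a multi-node check, pass `8 16` as the last two arguments. This only inspects the host; the limits that Docker Desktop applies still need to be checked in its settings.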

Software Requirements

Solo manages most of its own dependencies depending on how you install it:

- Homebrew install (`brew install hiero-ledger/tools/solo`): automatically installs Node.js in addition to Solo.

- `one-shot` commands: automatically install Kind, kubectl, Helm, and Podman (an alternative to Docker) if they are not already present.

You do not need to pre-install these tools manually before running Solo.

The only hard requirement before you begin is a container runtime - either Docker Desktop or Podman. Solo cannot install a container runtime on your behalf.

| Tool | Required Version | Where to get it |

|---|---|---|

| Node.js | >= 22.0.0 (lts/jod) | nodejs.org |

| Kind | >= v0.29.0 | kind.sigs.k8s.io |

| Kubernetes | >= v1.32.2 | Installed automatically by Kind |

| Kubectl | >= v1.32.2 | kubernetes.io |

| Helm | v3.14.2 | helm.sh |

| Docker | See Docker section below | docker.com |

| k9s (optional) | >= v0.27.4 | k9scli.io |

Docker

Solo requires Docker Desktop (macOS, Windows) or Docker Engine / Podman (Linux) with the following minimum resource allocation:

- Memory: at least 12 GB allocated to Docker.

- CPU: at least 6 cores allocated to Docker.

Configure Docker Desktop Resources

To allocate the required resources in Docker Desktop:

1. Open Docker Desktop.

2. Go to Settings > Resources > Memory and set it to at least 12 GB.

3. Go to Settings > Resources > CPU and set it to at least 6 cores.

4. Click Apply & Restart.

Note: If Docker Desktop does not have enough memory or CPU allocated, the one-shot deployment will fail or produce unhealthy pods.

Platform Setup

Solo supports macOS, Linux, and Windows via WSL2. Select your platform below to install the required container runtime and configure your environment, before proceeding to Quickstart:

macOS

Install Homebrew (if not already installed):

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

Install Docker Desktop:

- Download from: https://www.docker.com/products/docker-desktop

- Start Docker Desktop and allocate at least 12 GB of memory:

- Docker Desktop > Settings > Resources > Memory

Remove existing npm-based installs:

if command -v npm >/dev/null 2>&1; then npm uninstall -g @hashgraph/solo >/dev/null 2>&1 || true; fi

Install Solo (this installs all other dependencies automatically):

brew tap hiero-ledger/tools
brew update
brew install solo

Verify the installation:

solo --version

Linux

Install Homebrew for Linux:

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

Add Homebrew to your PATH:

echo 'eval "$(/home/linuxbrew/.linuxbrew/bin/brew shellenv)"' >> ~/.bashrc
eval "$(/home/linuxbrew/.linuxbrew/bin/brew shellenv)"

Install Docker Engine (for Ubuntu/Debian):

sudo apt-get update
sudo apt-get install -y docker.io
sudo systemctl enable docker
sudo systemctl start docker
sudo usermod -aG docker ${USER}

Log out and back in for the group changes to take effect.

Install kubectl:

sudo apt update && sudo apt install -y ca-certificates curl
ARCH="$(dpkg --print-architecture)"
curl -fsSLo kubectl "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/${ARCH}/kubectl"
chmod +x kubectl
sudo mv kubectl /usr/local/bin/kubectl

Remove existing npm-based installs:

if command -v npm >/dev/null 2>&1; then npm uninstall -g @hashgraph/solo >/dev/null 2>&1 || true; fi

Install Solo (this installs all other dependencies automatically):

brew tap hiero-ledger/tools
brew update
brew install solo

Verify the installation:

solo --version

Windows (WSL2)

Run the following command in Windows PowerShell (as Administrator), then reboot and open the Ubuntu terminal. All subsequent commands must be run inside the Ubuntu (WSL2) terminal.

wsl --install Ubuntu

Install Homebrew for Linux:

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

Add Homebrew to your PATH:

echo 'eval "$(/home/linuxbrew/.linuxbrew/bin/brew shellenv)"' >> ~/.bashrc
eval "$(/home/linuxbrew/.linuxbrew/bin/brew shellenv)"

Install Docker Desktop for Windows:

- Download from: https://www.docker.com/products/docker-desktop

- Enable WSL2 integration: Docker Desktop > Settings > Resources > WSL Integration

- Allocate at least 12 GB of memory: Docker Desktop > Settings > Resources > Memory

Install kubectl:

sudo apt update && sudo apt install -y ca-certificates curl
ARCH="$(dpkg --print-architecture)"
curl -fsSLo kubectl "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/${ARCH}/kubectl"
chmod +x kubectl
sudo mv kubectl /usr/local/bin/kubectl

Remove existing npm-based installs:

if command -v npm >/dev/null 2>&1; then npm uninstall -g @hashgraph/solo >/dev/null 2>&1 || true; fi

Install Solo (this installs all other dependencies automatically):

brew tap hiero-ledger/tools
brew update
brew install solo

Verify the installation:

solo --version

Important: Always run Solo commands from the WSL2 terminal, not from Windows PowerShell or Command Prompt.

Alternative Installation: npm (for contributors and advanced users)

If you need more control over dependencies or are contributing to Solo development, you can install Solo via npm instead of Homebrew.

Note: Node.js >= 22.0.0 and Kind must be installed separately before using this method.

npm install -g @hashgraph/solo

Optional Tools

The following tools are not required but are recommended for monitoring and managing your local network:

- k9s (>= v0.27.4): A terminal-based UI for managing Kubernetes clusters. Install it with:

brew install k9s

Run `k9s` to launch the cluster viewer.

Version Compatibility Reference

The table below shows the full compatibility matrix for the current and recent Solo releases:

| Solo Version | Node.js | Kind | Solo Chart | Hedera | Kubernetes | Kubectl | Helm | k9s | Docker Resources | Release Date | End of Support |

|---|---|---|---|---|---|---|---|---|---|---|---|

| 0.59.0 | >= 22.0.0 (lts/jod) | >= v0.29.0 | v0.62.0 | v0.71.0 | >= v1.32.2 | >= v1.32.2 | v3.14.2 | >= v0.27.4 | Memory >= 12 GB, CPU >= 6 cores | 2026-02-27 | 2026-03-27 |

| 0.58.0 (LTS) | >= 22.0.0 (lts/jod) | >= v0.29.0 | v0.62.0 | v0.71.0 | >= v1.32.2 | >= v1.32.2 | v3.14.2 | >= v0.27.4 | Memory >= 12 GB, CPU >= 6 cores | 2026-02-25 | 2026-05-25 |

| 0.57.0 | >= 22.0.0 (lts/jod) | >= v0.29.0 | v0.60.2 | v0.71.0 | >= v1.32.2 | >= v1.32.2 | v3.14.2 | >= v0.27.4 | Memory >= 12 GB, CPU >= 6 cores | 2026-02-19 | 2026-03-19 |

| 0.56.0 (LTS) | >= 22.0.0 (lts/jod) | >= v0.29.0 | v0.60.2 | v0.68.7-rc.1 | >= v1.32.2 | >= v1.32.2 | v3.14.2 | >= v0.27.4 | Memory >= 12 GB, CPU >= 6 cores | 2026-02-12 | 2026-05-12 |

| 0.55.0 | >= 22.0.0 (lts/jod) | >= v0.29.0 | v0.60.2 | v0.68.7-rc.1 | >= v1.32.2 | >= v1.32.2 | v3.14.2 | >= v0.27.4 | Memory >= 12 GB, CPU >= 6 cores | 2026-02-05 | 2026-03-05 |

| 0.54.0 (LTS) | >= 22.0.0 (lts/jod) | >= v0.29.0 | v0.59.0 | v0.68.6+ | >= v1.32.2 | >= v1.32.2 | v3.14.2 | >= v0.27.4 | Memory >= 12 GB, CPU >= 6 cores | 2026-01-27 | 2026-04-27 |

| 0.52.0 (LTS) | >= 22.0.0 (lts/jod) | >= v0.26.0 | v0.58.1 | v0.67.2+ | >= v1.27.3 | >= v1.27.3 | v3.14.2 | >= v0.27.4 | Memory >= 12 GB, CPU >= 6 cores | 2025-12-11 | 2026-03-11 |

For a list of legacy releases, see the legacy versions documentation.

Troubleshooting Installation

If you experience issues installing or upgrading Solo (for example, conflicts with a previous installation), you may need to clean up your environment first.

Warning: The commands below will delete Solo-managed Kind clusters and remove your Solo home directory (`~/.solo`).

# Delete only Solo-managed Kind clusters (names starting with "solo")

kind get clusters | grep '^solo' | while read -r cluster; do
  kind delete cluster -n "$cluster"
done

# Remove Solo configuration and cache

rm -rf ~/.solo

After cleaning up, retry the installation with:

brew install hiero-ledger/tools/solo

1.2 - Quickstart

Overview

Solo Quickstart provides a single, one-shot command path to deploy a running Hiero test network using the Solo CLI tool. This guide covers installing Solo, running the one-shot deployment, verifying the network, and accessing local service endpoints.

Note: This guide assumes basic familiarity with command-line interfaces and Docker.

Prerequisites

Before you begin, ensure you have completed the following:

- System Readiness:

- Prepare your local environment (Docker, Kind, Kubernetes, and related tooling) by following the System Readiness guide.

Note: Quickstart only covers what you need to run `solo one-shot single deploy` and verify that the network is working. Detailed version requirements, OS-specific notes, and optional tools are documented in System Readiness.

Install Solo CLI

Install the latest Solo CLI globally using one of the following methods:

Homebrew (recommended for macOS/Linux/WSL2):

brew install hiero-ledger/tools/solo

npm (alternatively, install Solo via npm):

npm install -g @hashgraph/solo@latest

Verify the installation

Confirm that Solo is installed and available on your PATH:

solo --version

Expected output (version may be different):

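******************************* Solo *********************************************
Version : 0.59.1
**********************************************************************************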

If you see a similar banner with a valid Solo version (for example, 0.59.1), your installation is successful.

Deploy a local network (one-shot)

Use the one-shot command to create and configure a fully functional local Hiero network:

solo one-shot single deploy

This command performs the following actions:

- Creates or connects to a local Kubernetes cluster using Kind.

- Deploys the Solo network components.

- Sets up and funds default test accounts.

- Exposes gRPC and JSON-RPC endpoints for client access.

What gets deployed

| Component | Description |

|---|---|

| Consensus Node | Hiero consensus node for processing transactions. |

| Mirror Node | Stores and serves historical transaction data. |

| Explorer UI | Web interface for viewing accounts and transactions. |

| JSON RPC Relay | Ethereum-compatible JSON RPC interface. |

Multiple Node Deployment - for testing consensus scenarios

To deploy multiple consensus nodes, pass the --num-consensus-nodes flag:

solo one-shot multiple deploy --num-consensus-nodes 3

This deploys 3 consensus nodes along with the same components as the single-node setup (mirror node, explorer, relay).

Note: Multiple node deployments require more resources. Ensure you have at least 16 GB of memory and 8 CPU cores allocated to Docker before running this command. See System Readiness for the full multi-node requirements.

When finished, destroy the network as usual:

solo one-shot multiple destroy

Verify the network

After the one-shot deployment completes, verify that the Kubernetes workloads are healthy.

You can monitor the Kubernetes workloads with standard tools:

kubectl get pods -A | grep -v kube-system

Confirm that all Solo-related pods are in a Running or Completed state.
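To avoid scanning the list by eye, the same output can be filtered for pods that are not yet healthy. This is a minimal sketch, assuming the default `kubectl get pods` column layout (NAMESPACE, NAME, READY, STATUS, RESTARTS, AGE); `unhealthy_pods` is a hypothetical helper name, not a Solo or kubectl command.

```shell
# Print pods whose STATUS column is neither Running nor Completed.
# Expects `kubectl get pods -A --no-headers` output on stdin.
unhealthy_pods() {
  awk '$4 != "Running" && $4 != "Completed" { print $1 "/" $2 " is " $4 }'
}

# Only query the cluster when kubectl is installed; no output means every
# non-system pod is Running or Completed (or no cluster is reachable).
if command -v kubectl >/dev/null 2>&1; then
  kubectl get pods -A --no-headers 2>/dev/null | grep -v kube-system | unhealthy_pods || true
fi
```

Pods in states such as Pending or CrashLoopBackOff shortly after deployment may simply still be starting, so it can help to rerun the check after a minute or two.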

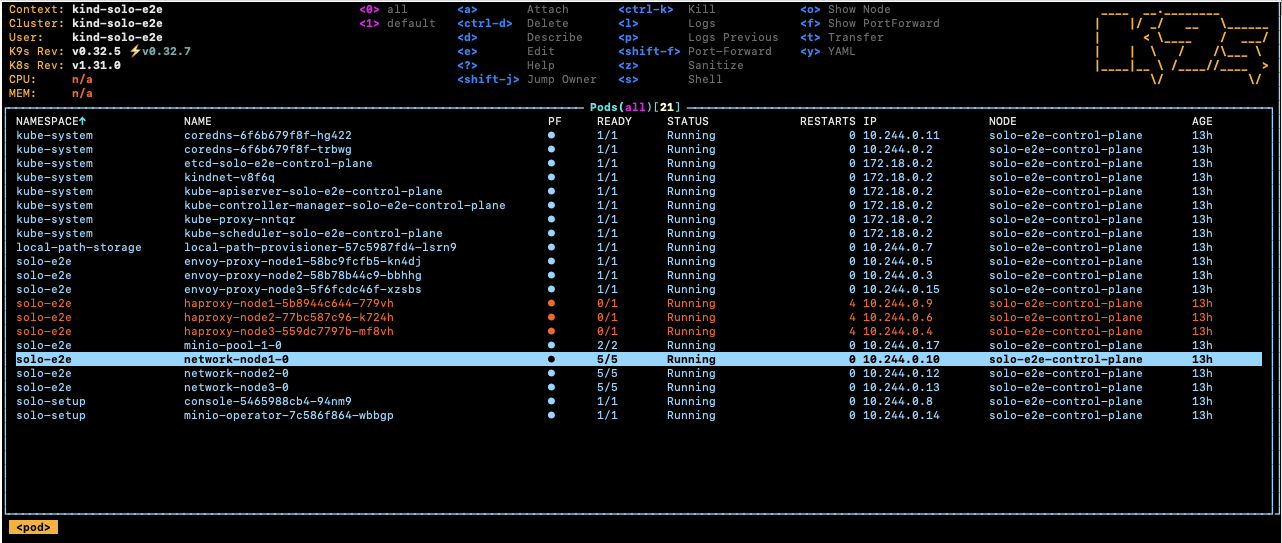

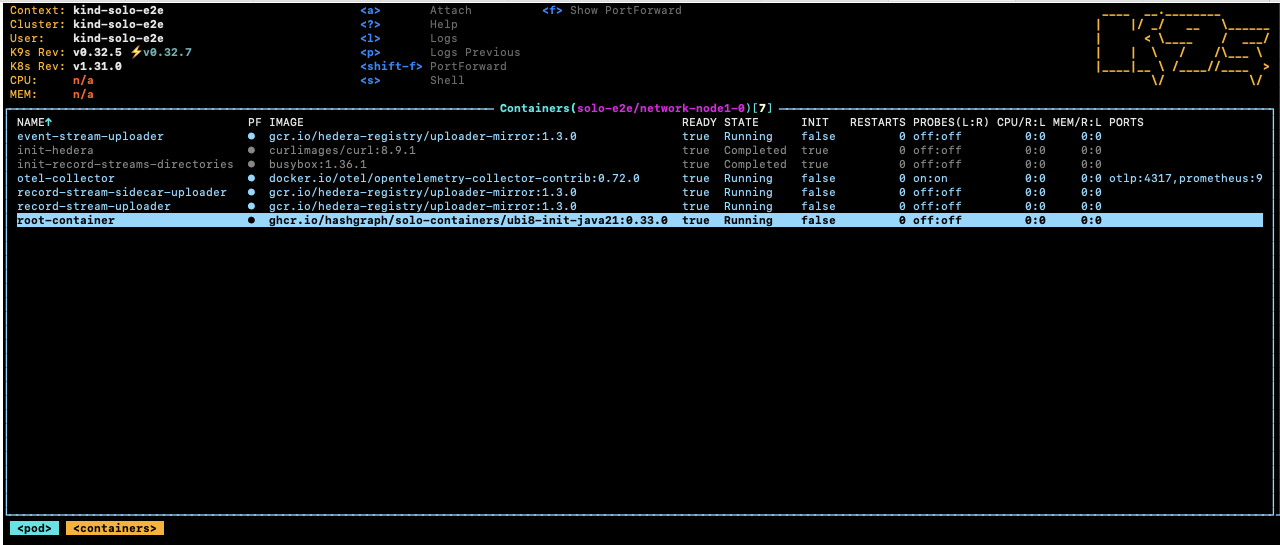

Tip: The Solo testing team recommends k9s for managing Kubernetes clusters. It provides a terminal-based UI that makes it easy to view pods, logs, and cluster status. Install it with `brew install k9s` and run `k9s` to launch.

Access your local network

After the one-shot deployment completes and all pods are running, your local services are available at the following endpoints:

| Service | Endpoint | Description | Verification |
|---|---|---|---|
| Explorer UI | http://localhost:38080 | Web UI for inspecting the network. | Open the URL in your browser to view the network explorer. |
| Consensus node (gRPC) | localhost:35211 | gRPC endpoint for transactions. | nc -zv localhost 35211 |
| Mirror node REST API | http://localhost:38081 | REST API for queries. | http://localhost:38081/api/v1/transactions |
| JSON RPC relay | localhost:37546 | Ethereum-compatible JSON RPC endpoint. | curl -X POST http://localhost:37546 -H 'Content-Type: application/json' |
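For a slightly stronger check of the JSON RPC relay than a bare POST, a standard `eth_chainId` request can be sent and the hex result decoded. The sketch below assumes the relay is reachable on the port from the table above; `RELAY_URL` and the helper function names are hypothetical. With the default Solo chain ID of 298 the relay would be expected to answer `0x12a`, though that depends on your configuration.

```shell
# Sketch: ask the JSON RPC relay for its chain ID and print it in decimal.
RELAY_URL="${RELAY_URL:-http://localhost:37546}"   # hypothetical override variable

# Standard JSON-RPC 2.0 request body for eth_chainId.
chain_id_request() {
  printf '{"jsonrpc":"2.0","method":"eth_chainId","params":[],"id":1}'
}

# Convert a hex quantity such as 0x12a to decimal.
hex_to_dec() {
  printf '%d\n' "$1"
}

# Pull the "result" string out of a JSON-RPC response without requiring jq.
extract_result() {
  sed -n 's/.*"result"[ ]*:[ ]*"\([^"]*\)".*/\1/p'
}

# Send the request only if curl is available; an unreachable relay prints a hint.
if command -v curl >/dev/null 2>&1; then
  response="$(curl -s --max-time 5 -X POST "$RELAY_URL" \
    -H 'Content-Type: application/json' -d "$(chain_id_request)")" || response=""
  result="$(printf '%s' "$response" | extract_result)"
  if [ -n "$result" ]; then
    echo "chain ID: $(hex_to_dec "$result")"
  else
    echo "relay not reachable at $RELAY_URL"
  fi
fi
```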

1.3 - Managing Your Network

Overview

This guide covers day-to-day management operations for a running Solo network, including starting, stopping, and restarting nodes, capturing logs, and upgrading the network.

Prerequisites

Before proceeding, ensure you have completed the following:

- System Readiness - your local environment meets all hardware and software requirements.

- Quickstart - you have a running Solo network deployed using `solo one-shot single deploy`.

Find Your Deployment Name

Most management commands require your deployment name. Run the following command to retrieve it:

cat ~/.solo/cache/last-one-shot-deployment.txt

Expected output:

solo-deployment-<hash>

Use the value returned from this command as <deployment-name> in all commands on this page.
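If you run several management commands in a row, it can be convenient to capture the name in a shell variable once. The following is a sketch only: `read_deployment_name` is a hypothetical helper, and the variable name `SOLO_DEPLOYMENT` is chosen for illustration.

```shell
# read_deployment_name FILE -> prints the first line of FILE; fails if the
# file is missing or empty.
read_deployment_name() {
  rdn_file="$1"
  rdn_name=""
  [ -r "$rdn_file" ] || { echo "no deployment file at $rdn_file" >&2; return 1; }
  IFS= read -r rdn_name < "$rdn_file" || true   # tolerate a missing trailing newline
  [ -n "$rdn_name" ] || return 1
  printf '%s\n' "$rdn_name"
}

# Path taken from the command above.
DEPLOYMENT_FILE="${HOME}/.solo/cache/last-one-shot-deployment.txt"

if SOLO_DEPLOYMENT="$(read_deployment_name "$DEPLOYMENT_FILE" 2>/dev/null)"; then
  echo "Using deployment: $SOLO_DEPLOYMENT"
  # Example follow-up, using a command from this page:
  # solo consensus node restart --deployment "$SOLO_DEPLOYMENT"
else
  echo "No one-shot deployment recorded yet"
fi
```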

Stopping and Starting Nodes

Stop all nodes

Use this command to pause all consensus nodes without destroying the deployment:

solo consensus node stop --deployment <deployment-name>

Start nodes

Use this command to bring stopped nodes back online:

solo consensus node start --deployment <deployment-name>

Restart nodes

Use this command to stop and start all nodes in a single operation:

solo consensus node restart --deployment <deployment-name>

To verify pod status after any of the above commands, see Verify the network in the Quickstart guide.

Viewing Logs

To capture logs and diagnostic information for your deployment:

solo deployment diagnostics all --deployment <deployment-name>

Logs are saved to ~/.solo/logs/.

Expected output:

******************************* Solo *********************************************

Version : 0.59.1

Kubernetes Context : kind-solo

Kubernetes Cluster : kind-solo

Current Command : deployment diagnostics all --deployment <deployment-name>

**********************************************************************************

✔ Initialize [0.3s]

✔ Get consensus node logs and configs [15s]

✔ Get Helm chart values from all releases [2s]

✔ Downloaded logs from 10 Hiero component pods [1s]

✔ Get node states [10s]

Configurations and logs saved to /Users/<username>/.solo/logs

Log zip file network-node1-0-log-config.zip downloaded to /Users/<username>/.solo/logs/<deployment-name>

Helm chart values saved to /Users/<username>/.solo/logs/helm-chart-values

You can also retrieve logs for a specific pod directly using kubectl:

kubectl logs -n <namespace> <pod-name>

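Replace <namespace> and <pod-name> with the values for your deployment. To list the namespaces and pod names, run:

kubectl get pods -A | grep -v kube-system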

Updating the Network

To update your consensus nodes to a new Hiero version:

solo consensus network upgrade --deployment <deployment-name> --upgrade-version <version>

Replace <version> with the Hiero version you want to upgrade to.

Note: Check the Version Compatibility Reference in the System Readiness guide to confirm the Hiero version supported by your current Solo release before upgrading.

1.4 - Cleanup

Overview

This guide covers how to tear down a Solo network deployment, understand resource usage, and perform a full reset when needed.

Prerequisites

Before proceeding, ensure you have completed the following:

- Quickstart - you have a running Solo network deployed using `solo one-shot single deploy`.

Destroying Your Network

Important: Always destroy your network before deploying a new one to avoid conflicts and errors.

To remove your Solo network:

solo one-shot single destroy

This command performs the following actions:

- Removes all deployed pods and services in the Solo namespace.

- Cleans up the Kubernetes namespace, which also removes associated PVCs when namespace deletion completes successfully.

- Deletes the Kind cluster.

- Updates Solo’s internal state.

Note: `solo one-shot single destroy` does not delete the underlying Kind cluster. If you created a Solo network on a local Kind cluster, the cluster remains until you delete it manually.

Failure modes and rerunning destroy

If solo one-shot single destroy fails part-way through (for example, due to an earlier deploy error), some resources may remain:

- The Solo namespace or one or more PVCs may not be deleted, which can leave Docker volumes appearing as “in use”.

- The destroy commands are designed to be idempotent, so you can safely rerun `solo one-shot single destroy` to complete cleanup.

If rerunning destroy does not release the resources, use the Full Reset procedure below to force a clean state.

Resource Usage

Solo deploys a fully functioning mirror node that stores the transaction history generated by your local test network. During active testing, the mirror node’s resource consumption will grow as it processes more transactions. If you notice increasing resource usage, destroy and redeploy the network to reset it to a clean state.

Full Reset

Warning: This is a last-resort procedure. Only use the Full Reset if `solo one-shot single destroy` fails or your Solo state is corrupted. For normal teardown, always use `solo one-shot single destroy` instead.

# Delete only Solo-managed Kind clusters (names starting with "solo")

kind get clusters | grep '^solo' | while read -r cluster; do
  kind delete cluster -n "$cluster"
done

# Remove Solo configuration and cache

rm -rf ~/.solo

Warning: The commands above will delete all Solo-managed Kind clusters and remove your Solo home directory (`~/.solo`). Always use the `grep '^solo'` filter when listing clusters; omitting it will delete every Kind cluster on your machine, including any unrelated to Solo.
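Before running the reset, you may want to preview which clusters the filter selects. The sketch below performs a dry run that only prints names and deletes nothing; `filter_solo_clusters` is a hypothetical helper wrapping the same `grep '^solo'` filter.

```shell
# Keep only cluster names that start with "solo".
filter_solo_clusters() {
  grep '^solo' || true   # having no matching clusters is not an error here
}

# Dry run: print what the Full Reset loop would delete, without deleting it.
if command -v kind >/dev/null 2>&1; then
  kind get clusters 2>/dev/null | filter_solo_clusters | while read -r cluster; do
    echo "would delete: $cluster"
  done
fi
```

Note that the pattern is anchored at the start of the name, so a cluster called `my-solo` is left alone while `solo-e2e` is selected.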

After deleting the Kind cluster, Kubernetes resources (including namespaces and PVCs) and their associated volumes should be released. If Docker still reports unused volumes that you want to remove, you can optionally run:

# Optional: remove all unused Docker volumes

docker volume prune

Warning: `docker volume prune` removes all unused Docker volumes on your machine, not just those created by Solo. Only run this command if you understand its impact.

- To redeploy after a full reset, follow the Quickstart guide.

2 - Advanced Solo Setup

2.1 - Using Environment Variables

Overview

Solo supports a set of environment variables that let you customize its behaviour without modifying command-line flags on every run. Variables set in your shell environment take effect automatically for all subsequent Solo commands.

Tip: Add frequently used variables to your shell profile (e.g. `~/.zshrc` or `~/.bashrc`) to persist them across sessions.
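As an illustration, profile entries might look like the following. The variable names and accepted values come from the tables below; the specific values chosen here are only examples, not recommendations.

```shell
# Illustrative shell profile entries for Solo.
export SOLO_LOG_LEVEL=debug          # default: info
export PODS_READY_MAX_ATTEMPTS=600   # default: 300; raised here for a slower machine

# Show which Solo-related variables the current shell will pass on.
env | grep -E '^(SOLO_|PODS_)' | sort
```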

General

| Environment Variable | Description | Default Value |
|---|---|---|
| `SOLO_HOME` | Path to the Solo cache and log files | ~/.solo |
| `SOLO_CACHE_DIR` | Path to the Solo cache directory | ~/.solo/cache |
| `SOLO_LOG_LEVEL` | Logging level for Solo operations. Accepted values: trace, debug, info, warn, error | info |
| `SOLO_DEV_OUTPUT` | Treat all commands as if the --dev flag were specified | false |
| `SOLO_CHAIN_ID` | Chain ID of the Solo network | 298 |
| `FORCE_PODMAN` | Force the use of Podman as the container engine when creating a new local cluster. Accepted values: true, false | false |

Network and Node Identity

| Environment Variable | Description | Default Value |
|---|---|---|
| `DEFAULT_START_ID_NUMBER` | First node account ID of the Solo test network | 0.0.3 |
| `SOLO_NODE_INTERNAL_GOSSIP_PORT` | Internal gossip port used by the Hiero network | 50111 |
| `SOLO_NODE_EXTERNAL_GOSSIP_PORT` | External gossip port used by the Hiero network | 50111 |
| `SOLO_NODE_DEFAULT_STAKE_AMOUNT` | Default stake amount for a node | 500 |
| `GRPC_PORT` | gRPC port used for local node communication | 50211 |
| `LOCAL_NODE_START_PORT` | Local node start port for the Solo network | 30212 |
| `SOLO_CHAIN_ID` | Chain ID of the Solo network | 298 |

Operator and Key Configuration

| Environment Variable | Description | Default Value |
|---|---|---|
| `SOLO_OPERATOR_ID` | Operator account ID for the Solo network | 0.0.2 |
| `SOLO_OPERATOR_KEY` | Operator private key for the Solo network | 302e020100... |
| `SOLO_OPERATOR_PUBLIC_KEY` | Operator public key for the Solo network | 302a300506... |
| `FREEZE_ADMIN_ACCOUNT` | Freeze admin account ID for the Solo network | 0.0.58 |
| `GENESIS_KEY` | Genesis private key for the Solo network | 302e020100... |

Note: Full key values are omitted above for readability. Refer to the source defaults for complete key strings.

Node Client Behaviour

| Environment Variable | Description | Default Value |
|---|---|---|
| `NODE_CLIENT_MIN_BACKOFF` | Minimum wait time between retries, in milliseconds | 1000 |
| `NODE_CLIENT_MAX_BACKOFF` | Maximum wait time between retries, in milliseconds | 1000 |
| `NODE_CLIENT_REQUEST_TIMEOUT` | Time a transaction or query retries on a “busy” network response, in milliseconds | 600000 |
| `NODE_CLIENT_MAX_ATTEMPTS` | Maximum number of attempts for node client operations | 600 |
| `NODE_CLIENT_PING_INTERVAL` | Interval between node health pings, in milliseconds | 30000 |
| `NODE_CLIENT_SDK_PING_MAX_RETRIES` | Maximum number of retries for node health pings | 5 |
| `NODE_CLIENT_SDK_PING_RETRY_INTERVAL` | Interval between node health ping retries, in milliseconds | 10000 |
| `NODE_COPY_CONCURRENT` | Number of concurrent threads used when copying files to a node | 4 |
| `LOCAL_BUILD_COPY_RETRY` | Number of retries for local build copy operations | 3 |
| `ACCOUNT_UPDATE_BATCH_SIZE` | Number of accounts to update in a single batch operation | 10 |

Pod and Network Readiness

| Environment Variable | Description | Default Value |
|---|---|---|
| `PODS_RUNNING_MAX_ATTEMPTS` | Maximum number of attempts to check if pods are running | 900 |
| `PODS_RUNNING_DELAY` | Interval between pod running checks, in milliseconds | 1000 |
| `PODS_READY_MAX_ATTEMPTS` | Maximum number of attempts to check if pods are ready | 300 |
| `PODS_READY_DELAY` | Interval between pod ready checks, in milliseconds | 2000 |
| `NETWORK_NODE_ACTIVE_MAX_ATTEMPTS` | Maximum number of attempts to check if network nodes are active | 300 |
| `NETWORK_NODE_ACTIVE_DELAY` | Interval between network node active checks, in milliseconds | 1000 |
| `NETWORK_NODE_ACTIVE_TIMEOUT` | Maximum wait time for network nodes to become active, in milliseconds | 1000 |
| `NETWORK_PROXY_MAX_ATTEMPTS` | Maximum number of attempts to check if the network proxy is running | 300 |
| `NETWORK_PROXY_DELAY` | Interval between network proxy checks, in milliseconds | 2000 |
| `NETWORK_DESTROY_WAIT_TIMEOUT` | Maximum wait time for network teardown to complete, in milliseconds | 120 |
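When tuning these values, it can help to estimate the total time a readiness loop may wait. The sketch below assumes one check per delay interval up to the attempt limit, which the variable descriptions suggest but this page does not state explicitly; `max_wait_seconds` is a hypothetical helper. Under that assumption the pod-ready defaults give 300 × 2000 ms = 600 seconds.

```shell
# max_wait_seconds ATTEMPTS DELAY_MS -> prints ATTEMPTS * DELAY_MS in whole seconds
max_wait_seconds() {
  echo $(( $1 * $2 / 1000 ))
}

# Use the current environment if set, otherwise the defaults from the table above.
attempts="${PODS_READY_MAX_ATTEMPTS:-300}"
delay_ms="${PODS_READY_DELAY:-2000}"
echo "pods-ready wait budget: $(max_wait_seconds "$attempts" "$delay_ms") s"
```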

Block Node

| Environment Variable | Description | Default Value |
|---|---|---|
| `BLOCK_NODE_ACTIVE_MAX_ATTEMPTS` | Maximum number of attempts to check if block nodes are active | 100 |
| `BLOCK_NODE_ACTIVE_DELAY` | Interval between block node active checks, in milliseconds | 60 |
| `BLOCK_NODE_ACTIVE_TIMEOUT` | Maximum wait time for block nodes to become active, in milliseconds | 60 |
| `BLOCK_STREAM_STREAM_MODE` | The blockStream.streamMode value in consensus node application properties. Only applies when a Block Node is deployed | BOTH |
| `BLOCK_STREAM_WRITER_MODE` | The blockStream.writerMode value in consensus node application properties. Only applies when a Block Node is deployed | FILE_AND_GRPC |

Relay Node

| Environment Variable | Description | Default Value |
|---|---|---|
| `RELAY_PODS_RUNNING_MAX_ATTEMPTS` | Maximum number of attempts to check if relay pods are running | 900 |
| `RELAY_PODS_RUNNING_DELAY` | Interval between relay pod running checks, in milliseconds | 1000 |
| `RELAY_PODS_READY_MAX_ATTEMPTS` | Maximum number of attempts to check if relay pods are ready | 100 |
| `RELAY_PODS_READY_DELAY` | Interval between relay pod ready checks, in milliseconds | 1000 |

Load Balancer

| Environment Variable | Description | Default Value |
|---|---|---|
| `LOAD_BALANCER_CHECK_DELAY_SECS` | Delay between load balancer status checks, in seconds | 5 |
| `LOAD_BALANCER_CHECK_MAX_ATTEMPTS` | Maximum number of attempts to check load balancer status | 60 |

Lease Management

| Environment Variable | Description | Default Value |
|---|---|---|
| `SOLO_LEASE_ACQUIRE_ATTEMPTS` | Number of attempts to acquire a lock before failing | 10 |
| `SOLO_LEASE_DURATION` | Duration in seconds for which a lock is held before expiration | 20 |

Component Versions

| Environment Variable | Description | Default Value |
|---|---|---|
| `CONSENSUS_NODE_VERSION` | Release version of the Consensus Node to use | v0.65.1 |
| `BLOCK_NODE_VERSION` | Release version of the Block Node to use | v0.18.0 |
| `MIRROR_NODE_VERSION` | Release version of the Mirror Node to use | v0.138.0 |
| `EXPLORER_VERSION` | Release version of the Explorer to use | v25.1.1 |
| `RELAY_VERSION` | Release version of the JSON-RPC Relay to use | v0.70.0 |
| `INGRESS_CONTROLLER_VERSION` | Release version of the HAProxy Ingress Controller to use | v0.14.5 |
| `SOLO_CHART_VERSION` | Release version of the Solo Helm charts to use | v0.56.0 |
| `MINIO_OPERATOR_VERSION` | Release version of the MinIO Operator to use | 7.1.1 |
| `PROMETHEUS_STACK_VERSION` | Release version of the Prometheus Stack to use | 52.0.1 |
| `GRAFANA_AGENT_VERSION` | Release version of the Grafana Agent to use | 0.27.1 |

Helm Chart URLs

| Environment Variable | Description | Default Value |
|---|---|---|
| `JSON_RPC_RELAY_CHART_URL` | Helm chart repository URL for the JSON-RPC Relay | https://hiero-ledger.github.io/hiero-json-rpc-relay/charts |
| `MIRROR_NODE_CHART_URL` | Helm chart repository URL for the Mirror Node | https://hashgraph.github.io/hedera-mirror-node/charts |
| `EXPLORER_CHART_URL` | Helm chart repository URL for the Explorer | oci://ghcr.io/hiero-ledger/hiero-mirror-node-explorer/hiero-explorer-chart |
| `INGRESS_CONTROLLER_CHART_URL` | Helm chart repository URL for the ingress controller | https://haproxy-ingress.github.io/charts |
| `PROMETHEUS_OPERATOR_CRDS_CHART_URL` | Helm chart repository URL for the Prometheus Operator CRDs | https://prometheus-community.github.io/helm-charts |
| `NETWORK_LOAD_GENERATOR_CHART_URL` | Helm chart repository URL for the Network Load Generator | oci://swirldslabs.jfrog.io/load-generator-helm-release-local |

Network Load Generator

| Environment Variable | Description | Default Value |
|---|---|---|
| `NETWORK_LOAD_GENERATOR_CHART_VERSION` | Release version of the Network Load Generator Helm chart to use | v0.7.0 |
| `NETWORK_LOAD_GENERATOR_PODS_RUNNING_MAX_ATTEMPTS` | Maximum number of attempts to check if Network Load Generator pods are running | 900 |
| `NETWORK_LOAD_GENERATOR_POD_RUNNING_DELAY` | Interval between Network Load Generator pod running checks, in milliseconds | 1000 |

One-Shot Deployment

| Environment Variable | Description | Default Value |
|---|---|---|
| `ONE_SHOT_WITH_BLOCK_NODE` | Deploy Block Node as part of a one-shot deployment | false |
| `MIRROR_NODE_PINGER_TPS` | Transactions per second for the Mirror Node monitor pinger. Set to 0 to disable | 5 |

2.2 - Network Deployments

2.2.1 - One-shot Falcon Deployment

Overview

One-shot Falcon deployment is Solo’s YAML-driven one-shot workflow. It uses the same core

deployment pipeline as solo one-shot single deploy, but lets you inject

component-specific flags through a single values file.

Use one-shot Falcon deployment when you need a repeatable advanced setup, want to check a complete deployment into source control, or need to customise component flags without running every Solo command manually.

Falcon is especially useful for:

- CI/CD pipelines and automated test environments.

- Reproducible local developer setups.

- Advanced deployments that need custom chart paths, image versions, ingress, storage, TLS, or node startup options.

Important: Falcon is an orchestration layer over Solo’s standard commands. It does not introduce a separate deployment model. Solo still creates a deployment, attaches clusters, deploys the network, configures nodes, and then adds optional components such as mirror node, explorer, and relay.

Prerequisites

Before proceeding, ensure you have completed the following:

- System Readiness - your local environment meets the hardware and software requirements for Solo, Kubernetes, Docker, Kind, kubectl, and Helm.

- Quickstart - you are already familiar with the standard one-shot deployment workflow.

- Set your environment variables if you have not already done so:

export SOLO_CLUSTER_NAME=solo
export SOLO_NAMESPACE=solo
export SOLO_CLUSTER_SETUP_NAMESPACE=solo-cluster
export SOLO_DEPLOYMENT=solo-deployment

How Falcon Works

When you run Falcon deployment, Solo executes the same end-to-end deployment sequence used by its one-shot workflows:

- Connect to the Kubernetes cluster.

- Create a deployment and attach the cluster reference.

- Set up shared cluster components.

- Generate gossip and TLS keys.

- Deploy the consensus network and, if enabled, the block node (in parallel).

- Set up and start consensus nodes.

- Optionally, deploy mirror node, explorer, and relay in parallel for faster startup.

- Create predefined test accounts.

- Write deployment notes, versions, port-forward details, and account data to a local output directory.

The difference is that Falcon reads a YAML file and maps its top-level sections to the underlying Solo subcommands.

| Values file section | Solo subcommand invoked |
|---|---|
| `network` | `solo consensus network deploy` |
| `setup` | `solo consensus node setup` |
| `consensusNode` | `solo consensus node start` |
| `mirrorNode` | `solo mirror node add` |
| `explorerNode` | `solo explorer node add` |
| `relayNode` | `solo relay node add` |
| `blockNode` | `solo block node add` (when `ONE_SHOT_WITH_BLOCK_NODE=true`) |

For the full list of supported CLI flags per section, see the Falcon Values File Reference.

Create a Falcon Values File

Create a YAML file to control every component of your Solo deployment. The file can have any name; `falcon-values.yaml` is used throughout this guide as a convention.

Note: Keys within each section must be the full CLI flag name, including the -- prefix: for example, --release-tag, not release-tag or -r. Any section you omit from the file is skipped, and Solo uses the built-in defaults for that component.
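A quick way to catch keys that are missing the `--` prefix is to scan the indented lines of the values file. The sketch below assumes a flat two-level file (top-level sections with indented flag keys, no nested lists); `check_falcon_keys` is a hypothetical helper, not a Solo command.

```shell
# Report nested keys that are not written as long flags (leading "--").
# Returns non-zero if any such key is found.
check_falcon_keys() {
  awk '
    # Indented, non-comment line whose first token does not start with "--".
    /^[ \t]+[^ \t#]/ && $1 !~ /^--/ { printf "line %d: %s\n", NR, $1; bad = 1 }
    END { exit bad + 0 }
  ' "$1"
}

# Demonstration with a throwaway file that contains one invalid key.
tmp="$(mktemp)"
printf 'network:\n  --release-tag: "v0.71.0"\n  pvcs: false\n' > "$tmp"
check_falcon_keys "$tmp" || echo "fix the keys listed above"
rm -f "$tmp"
```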

Example: Single-Node Falcon Deployment

The following falcon-values.yaml example deploys a standard single-node network with mirror node, explorer, and relay enabled:

network:
  --release-tag: "v0.71.0"
  --pvcs: false
setup:
  --release-tag: "v0.71.0"
consensusNode:
  --force-port-forward: true
mirrorNode:
  --enable-ingress: true
  --pinger: true
  --force-port-forward: true
explorerNode:
  --enable-ingress: true
  --force-port-forward: true
relayNode:
  --node-aliases: "node1"
  --force-port-forward: true

Deploy with Falcon one-shot

Run Falcon deployment by pointing Solo at the values file:

solo one-shot falcon deploy --values-file falcon-values.yaml

Solo creates a one-shot deployment, applies the values from the YAML file to the appropriate subcommands, and then deploys the full environment.
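For scripted runs, the values file and the deploy command can be produced together. The following sketch writes the single-node example to a temporary directory and prints the deploy command rather than executing it; the `WORK_DIR` and `VALUES_FILE` variable names are illustrative assumptions.

```shell
# Write the single-node example to disk, then print the deploy command.
WORK_DIR="$(mktemp -d)"                      # hypothetical scratch location
VALUES_FILE="$WORK_DIR/falcon-values.yaml"

cat > "$VALUES_FILE" <<'EOF'
network:
  --release-tag: "v0.71.0"
  --pvcs: false
setup:
  --release-tag: "v0.71.0"
consensusNode:
  --force-port-forward: true
mirrorNode:
  --enable-ingress: true
  --pinger: true
  --force-port-forward: true
explorerNode:
  --enable-ingress: true
  --force-port-forward: true
relayNode:
  --node-aliases: "node1"
  --force-port-forward: true
EOF

# Dry run: show the command instead of executing it.
echo "solo one-shot falcon deploy --values-file $VALUES_FILE"
```

To deploy for real, run the printed command in a shell where Solo is installed and the prerequisites are met.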

What Falcon Does Not Read from the File

Some Falcon settings are controlled directly by the top-level command flags, not by section entries in the values file:

- --values-file selects the YAML file to load.

- --deploy-mirror-node, --deploy-explorer, and --deploy-relay control whether those optional components are deployed at all.

- --deployment, --namespace, --cluster-ref, and --num-consensus-nodes are top-level one-shot inputs.

Important: Do not rely on --deployment inside falcon-values.yaml. Solo intentionally ignores --deployment values from section content during Falcon argument expansion. Set the deployment name on the command line if you need a specific name.

Tip: When not specified, Falcon uses these defaults: --deployment one-shot, --namespace one-shot, --cluster-ref one-shot, and --num-consensus-nodes 1. Pass any of these explicitly on the command line to override them.

Example:

solo one-shot falcon deploy \
  --deployment falcon-demo \
  --cluster-ref one-shot \
  --values-file falcon-values.yaml
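The way the top-level defaults combine with explicit overrides can be sketched as a small command builder. It only prints the command; `falcon_deploy_cmd` and its positional argument order are hypothetical conveniences, while the flags and default values are the ones listed in this guide.

```shell
# Print a Falcon deploy command, filling in the documented default for any
# argument that is omitted. Arguments, in order: values file, deployment,
# namespace, cluster reference, number of consensus nodes.
falcon_deploy_cmd() {
  local values_file="${1:-falcon-values.yaml}"
  local deployment="${2:-one-shot}"
  local namespace="${3:-one-shot}"
  local cluster_ref="${4:-one-shot}"
  local nodes="${5:-1}"
  printf 'solo one-shot falcon deploy --deployment %s --namespace %s --cluster-ref %s --num-consensus-nodes %s --values-file %s\n' \
    "$deployment" "$namespace" "$cluster_ref" "$nodes" "$values_file"
}

# Only the deployment name is overridden; everything else uses the defaults.
falcon_deploy_cmd falcon-values.yaml falcon-demo
```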

Multi-Node Falcon Deployment

For multiple consensus nodes, set the node count on the Falcon command and then provide matching per-node settings where required.

Example:

solo one-shot falcon deploy \
  --deployment falcon-multi \
  --num-consensus-nodes 3 \
  --values-file falcon-values.yaml

Example multi-node values file:

network:
  --release-tag: "v0.71.0"
  --pvcs: true
setup:
  --release-tag: "v0.71.0"
consensusNode:
  --force-port-forward: true
  --stake-amounts: "100,100,100"
mirrorNode:
  --enable-ingress: true
  --pinger: true
explorerNode:
  --enable-ingress: true
relayNode:
  --node-aliases: "node1,node2,node3"

The --node-aliases value in the relayNode section must match the node aliases generated by --num-consensus-nodes. Nodes are auto-named node1, node2, node3, and so on. Setting this to only node1 is valid if you want the relay to serve a single node, but specifying all aliases is typical for full coverage.

Use this pattern when you need a repeatable multi-node deployment but do not want to manage each step manually.

Note: Multi-node deployments require more host resources than single-node deployments. Follow the resource guidance in System Readiness, and increase Docker memory and CPU allocation before deploying.
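Because node aliases follow the node1, node2, node3 pattern, the comma-separated values for --node-aliases and --stake-amounts can be generated from the node count instead of typed by hand. The helper names below (`node_aliases`, `equal_stakes`) are hypothetical, and equal stakes are just one possible choice.

```shell
# Build "node1,node2,...,nodeN" for a given node count.
node_aliases() {
  local count="$1" i=1 out=""
  while [ "$i" -le "$count" ]; do
    out="${out:+$out,}node$i"
    i=$((i + 1))
  done
  printf '%s\n' "$out"
}

# Build a comma-separated list that repeats the same stake amount N times.
equal_stakes() {
  local count="$1" amount="$2" i=1 out=""
  while [ "$i" -le "$count" ]; do
    out="${out:+$out,}$amount"
    i=$((i + 1))
  done
  printf '%s\n' "$out"
}

node_aliases 3      # value for relayNode --node-aliases
equal_stakes 3 100  # value for consensusNode --stake-amounts
```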

(Optional) Component Toggles

Falcon can skip optional components at the command line without requiring a second YAML file.

For example, to deploy only the consensus network and mirror node:

solo one-shot falcon deploy \
  --values-file falcon-values.yaml \
  --deploy-explorer=false \
  --deploy-relay=false

Available toggles and their defaults:

| Flag | Default | Description |
|---|---|---|
| --deploy-mirror-node | true | Include the mirror node in the deployment. |
| --deploy-explorer | true | Include the explorer in the deployment. |
| --deploy-relay | true | Include the JSON RPC relay in the deployment. |

Important: The explorer and relay both depend on the mirror node. Setting --deploy-mirror-node=false while keeping --deploy-explorer=true or --deploy-relay=true is not a supported configuration and will result in a failed deployment.

This is useful when you want to:

- Reduce resource usage in CI jobs.

- Isolate one component during testing.

- Reuse the same YAML file across multiple deployment profiles.
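In a CI job it can help to reject an unsupported toggle combination before starting a deployment. The sketch below encodes only the dependency rule stated in this guide (explorer and relay require the mirror node); `check_falcon_toggles` is a hypothetical helper that takes the three toggle values as arguments.

```shell
# Arguments: mirror-node, explorer, relay toggle values ("true" or "false").
# Each defaults to "true", matching the documented flag defaults.
check_falcon_toggles() {
  local mirror="${1:-true}" explorer="${2:-true}" relay="${3:-true}"
  if [ "$mirror" = "false" ] && { [ "$explorer" = "true" ] || [ "$relay" = "true" ]; }; then
    echo "unsupported: explorer and relay require the mirror node" >&2
    return 1
  fi
}

# Consensus network and mirror node only: a supported combination.
check_falcon_toggles true false false && echo "toggles ok"
```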

Common Falcon Customisations

Because each YAML section maps directly to the corresponding Solo subcommand, you can use Falcon to centralise advanced options such as:

- Custom release tags for the consensus node platform.

- Local chart directories for mirror node, relay, explorer, or block node.

- Local consensus node build paths for development workflows.

- Ingress and domain settings.

- Mirror node external database settings.

- Node startup settings such as state files, port forwarding, and stake amounts.

- Storage backends and credentials for stream file handling.

Example: Local Development with Local Chart Directories

setup:
  --local-build-path: "/path/to/hiero-consensus-node/hedera-node/data"
mirrorNode:
  --mirror-node-chart-dir: "/path/to/hiero-mirror-node/charts"
relayNode:
  --relay-chart-dir: "/path/to/hiero-json-rpc-relay/charts"
explorerNode:
  --explorer-chart-dir: "/path/to/hiero-mirror-node-explorer/charts"

This pattern is useful for local integration testing against unpublished component builds.

Falcon with Block Node

Falcon can also include block node configuration.

Note: Block node workflows are advanced and require higher resource allocation and version compatibility across consensus node, block node, and related components. Docker memory must be set to at least 16 GB before deploying with block node enabled.

Block node support also requires the ONE_SHOT_WITH_BLOCK_NODE=true environment variable to be set before running falcon deploy. Without it, Solo skips the block node add step even if a blockNode section is present in the values file.

Block node deployment is subject to version compatibility requirements. Minimum versions are consensus node ≥ v0.72.0 and block node ≥ 0.29.0. Mixing incompatible versions will cause the deployment to fail. Check the Version Compatibility Reference before enabling block node.

Example:

network:
  --release-tag: "v0.72.0"
setup:
  --release-tag: "v0.72.0"
consensusNode:
  --force-port-forward: true
blockNode:
  --release-tag: "v0.29.0"
  --enable-ingress: false
mirrorNode:
  --enable-ingress: true
  --pinger: true
explorerNode:
  --enable-ingress: true
relayNode:
  --node-aliases: "node1"
  --force-port-forward: true

Use block node settings only when your target Solo and component versions are known to be compatible.
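The two block node preconditions described in this section (the environment variable and the minimum versions) can be checked before deploying. This is a minimal sketch, assuming `sort -V` is available (GNU coreutils and recent BSD/macOS) and that versions are plain `major.minor.patch` strings; the helper names are hypothetical and pre-release suffixes are not handled.

```shell
# Succeed when version $1 is greater than or equal to version $2.
# A leading "v" is ignored on both sides.
version_ge() {
  local have="${1#v}" need="${2#v}"
  [ "$(printf '%s\n%s\n' "$need" "$have" | sort -V | head -n 1)" = "$need" ]
}

# Arguments: consensus node release tag, block node release tag.
block_node_preflight() {
  local consensus="$1" block="$2"
  if [ "${ONE_SHOT_WITH_BLOCK_NODE:-}" != "true" ]; then
    echo "ONE_SHOT_WITH_BLOCK_NODE is not set to true" >&2
    return 1
  fi
  if ! version_ge "$consensus" 0.72.0; then
    echo "consensus node $consensus is older than v0.72.0" >&2
    return 1
  fi
  if ! version_ge "$block" 0.29.0; then
    echo "block node $block is older than 0.29.0" >&2
    return 1
  fi
}

export ONE_SHOT_WITH_BLOCK_NODE=true
block_node_preflight v0.72.0 v0.29.0 && echo "block node preflight passed"
```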

Rollback and Failure Behaviour

Falcon deployment enables automatic rollback by default.

If deployment fails after resources have already been created, Solo attempts to destroy the one-shot deployment automatically and clean up the namespace.

If you want to preserve the failed deployment for debugging, disable rollback:

solo one-shot falcon deploy \
  --values-file falcon-values.yaml \
  --no-rollback

Use --no-rollback only when you explicitly want to inspect partial resources, logs, or Kubernetes objects after a failed run.

Deployment Output

After a successful Falcon deployment, Solo writes deployment metadata to ~/.solo/one-shot-<deployment>/ where <deployment> is the value of the --deployment flag (default: one-shot).

This directory typically contains:

- notes - human-readable deployment summary

- versions - component versions recorded at deploy time

- forwards - port-forward configuration

- accounts.json - predefined test account keys and IDs. All accounts are ECDSA Alias accounts (EVM-compatible) and include a publicAddress field. The file also includes the system operator account.

This makes Falcon especially useful for automation, because the deployment artifacts are written to a predictable path after each run.
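An automation script can derive the output directory from the deployment name and check which artifacts are present. The sketch below uses the path pattern and entry names given above; the helper names are hypothetical, and the exact file names on disk may carry extensions that differ from this list.

```shell
# Resolve the output directory for a deployment name (default: one-shot).
falcon_output_dir() {
  printf '%s/.solo/one-shot-%s\n' "$HOME" "${1:-one-shot}"
}

# Report which of the typical artifacts exist for a deployment.
list_falcon_artifacts() {
  local dir name
  dir="$(falcon_output_dir "$1")"
  for name in notes versions forwards accounts.json; do
    if [ -e "$dir/$name" ]; then
      echo "found   $dir/$name"
    else
      echo "missing $dir/$name"
    fi
  done
}

list_falcon_artifacts falcon-demo
```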

To inspect the latest one-shot deployment metadata later, run:

solo one-shot show deployment

If port-forwards are interrupted after deployment - for example after a system restart or network disruption - restore them without redeploying:

solo deployment refresh port-forwards

Destroy a Falcon Deployment

Destroy the Falcon deployment with:

solo one-shot falcon destroy

Solo removes deployed extensions first, then destroys the mirror node, network, cluster references, and local deployment metadata.

If multiple deployments exist locally, Solo prompts you to choose which one to destroy unless you pass --deployment explicitly:

solo one-shot falcon destroy --deployment falcon-demo

When to Use Falcon vs. Manual Deployment

Use Falcon deployment when you want a single, repeatable command backed by a versioned YAML file.

Use Step-by-Step Manual Deployment when you need to pause between steps, inspect intermediate state, or debug a specific deployment phase in isolation.

In practice:

- Falcon is better for automation and repeatability.

- Manual deployment is better for debugging and low-level control.

Reference

- Falcon Values File Reference - full list of supported CLI flags, types, and defaults for every section.

- Upstream example values file - working reference from the Solo repository.

Tip: If you are creating a values file for the first time, start from the annotated template in the Solo repository rather than writing one from scratch:

examples/one-shot-falcon/falcon-values.yaml

This file includes all supported sections and flags with inline comments explaining each option. Copy it, remove what you do not need, and adjust the values for your environment.

2.2.2 - Falcon Values File Reference

Overview

This page catalogs the Solo CLI flags accepted under each top-level section of a Falcon values file. Each entry corresponds to the command-line flag that the underlying Solo subcommand accepts.

Sections map to subcommands as follows:

| Section | Solo subcommand |
|---|---|
| network | solo consensus network deploy |
| setup | solo consensus node setup |
| consensusNode | solo consensus node start |
| mirrorNode | solo mirror node add |
| explorerNode | solo explorer node add |
| relayNode | solo relay node add |
| blockNode | solo block node add |

All flag names must be written in long form with double dashes (for example, --release-tag). Flags left empty ("") or matching their default value are ignored by Solo at argument expansion time.
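To build intuition for the empty-value rule, the sketch below turns a list of `flag=value` pairs into command-line arguments and drops any pair whose value is empty. It is an illustration only, not Solo's implementation: `expand_section` is a hypothetical helper, and the comparison against each flag's default is not modelled.

```shell
# Convert "flag=value" pairs into a single argument string, skipping pairs
# whose value is empty.
expand_section() {
  local pair flag value out=""
  for pair in "$@"; do
    flag="${pair%%=*}"    # text before the first "="
    value="${pair#*=}"    # text after the first "="
    [ -n "$value" ] || continue
    out="${out:+$out }$flag $value"
  done
  printf '%s\n' "$out"
}

# The empty --domain-names entry is dropped from the result.
expand_section "--release-tag=v0.71.0" "--domain-names=" "--pvcs=true"
```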

Note: Not every flag listed here is relevant to every deployment. Use this page as a lookup when writing or debugging a values file. For a working example file, see the upstream reference at https://github.com/hiero-ledger/solo/tree/main/examples/one-shot-falcon.

Consensus Network Deploy — network

Flags passed to solo consensus network deploy.

| Flag | Type | Default | Description |
|---|---|---|---|
| --release-tag | string | current Hedera platform version | Consensus node release tag (e.g. v0.71.0). |
| --pvcs | boolean | false | Enable Persistent Volume Claims for consensus node storage. Required for node add operations. |
| --load-balancer | boolean | false | Enable load balancer for network node proxies. |
| --chart-dir | string | - | Path to a local Helm chart directory for the Solo network chart. |
| --solo-chart-version | string | current chart version | Specific Solo testing chart version to deploy. |
| --haproxy-ips | string | - | Static IP mapping for HAProxy pods (e.g. node1=127.0.0.1,node2=127.0.0.2). |
| --envoy-ips | string | - | Static IP mapping for Envoy proxy pods. |
| --debug-node-alias | string | - | Enable the default JVM debug port (5005) for the specified node alias. |
| --domain-names | string | - | Custom domain name mapping per node alias (e.g. node1=node1.example.com). |
| --grpc-tls-cert | string | - | TLS certificate path for gRPC, per node alias (e.g. node1=/path/to/cert). |
| --grpc-web-tls-cert | string | - | TLS certificate path for gRPC Web, per node alias. |
| --grpc-tls-key | string | - | TLS certificate key path for gRPC, per node alias. |
| --grpc-web-tls-key | string | - | TLS certificate key path for gRPC Web, per node alias. |
| --storage-type | string | minio_only | Stream file storage backend. Options: minio_only, aws_only, gcs_only, aws_and_gcs. |
| --gcs-write-access-key | string | - | GCS write access key. |
| --gcs-write-secrets | string | - | GCS write secret key. |
| --gcs-endpoint | string | - | GCS storage endpoint URL. |
| --gcs-bucket | string | - | GCS bucket name. |
| --gcs-bucket-prefix | string | - | GCS bucket path prefix. |
| --aws-write-access-key | string | - | AWS write access key. |
| --aws-write-secrets | string | - | AWS write secret key. |
| --aws-endpoint | string | - | AWS storage endpoint URL. |
| --aws-bucket | string | - | AWS bucket name. |
| --aws-bucket-region | string | - | AWS bucket region. |
| --aws-bucket-prefix | string | - | AWS bucket path prefix. |
| --settings-txt | string | template | Path to a custom settings.txt file for consensus nodes. |
| --application-properties | string | template | Path to a custom application.properties file. |
| --application-env | string | template | Path to a custom application.env file. |
| --api-permission-properties | string | template | Path to a custom api-permission.properties file. |
| --bootstrap-properties | string | template | Path to a custom bootstrap.properties file. |
| --log4j2-xml | string | template | Path to a custom log4j2.xml file. |
| --genesis-throttles-file | string | - | Path to a custom throttles.json file for network genesis. |
| --service-monitor | boolean | false | Install a ServiceMonitor custom resource for Prometheus metrics. |
| --pod-log | boolean | false | Install a PodLog custom resource for node pod log monitoring. |
| --quiet-mode | boolean | false | Suppress confirmation prompts. |
| --values-file | string | - | Comma-separated Helm chart values file paths (not the Falcon values file). |

Consensus Node Setup — setup

Flags passed to solo consensus node setup.

| Flag | Type | Default | Description |
|---|---|---|---|
| --release-tag | string | current Hedera platform version | Consensus node release tag. Must match network.--release-tag. |
| --local-build-path | string | - | Path to a local Hiero consensus node build (e.g. ~/hiero-consensus-node/hedera-node/data). Used for local development workflows. |
| --app | string | HederaNode.jar | Name of the consensus node application binary. |
| --app-config | string | - | Path to a JSON configuration file for the testing app. |
| --admin-public-keys | string | - | Comma-separated DER-encoded ED25519 public keys in node alias order. |
| --domain-names | string | - | Custom domain name mapping per node alias. |
| --dev | boolean | false | Enable developer mode. |
| --quiet-mode | boolean | false | Suppress confirmation prompts. |
| --cache-dir | string | ~/.solo/cache | Local cache directory for downloaded artifacts. |

Consensus Node Start — consensusNode

Flags passed to solo consensus node start.

| Flag | Type | Default | Description |
|---|---|---|---|
| --force-port-forward | boolean | true | Force port forwarding to access network services locally. |
| --stake-amounts | string | - | Comma-separated stake amounts in node alias order (e.g. 100,100,100). Required for multi-node deployments that need non-default stakes. |
| --state-file | string | - | Path to a zipped state file to restore the network from. |
| --debug-node-alias | string | - | Enable JVM debug port (5005) for the specified node alias. |
| --app | string | HederaNode.jar | Name of the consensus node application binary. |
| --quiet-mode | boolean | false | Suppress confirmation prompts. |

Mirror Node Add — mirrorNode

Flags passed to solo mirror node add.

| Flag | Type | Default | Description |
|---|---|---|---|
| --mirror-node-version | string | current version | Mirror node Helm chart version to deploy. |
| --enable-ingress | boolean | false | Deploy an ingress controller for the mirror node. |
| --force-port-forward | boolean | true | Enable port forwarding for mirror node services. |
| --pinger | boolean | false | Enable the mirror node Pinger service. |
| --mirror-static-ip | string | - | Static IP address for the mirror node load balancer. |
| --domain-name | string | - | Custom domain name for the mirror node. |
| --ingress-controller-value-file | string | - | Path to a Helm values file for the ingress controller. |
| --mirror-node-chart-dir | string | - | Path to a local mirror node Helm chart directory. |
| --use-external-database | boolean | false | Connect to an external PostgreSQL database instead of the chart-bundled one. |
| --external-database-host | string | - | Hostname of the external database. Requires --use-external-database. |
| --external-database-owner-username | string | - | Owner username for the external database. |
| --external-database-owner-password | string | - | Owner password for the external database. |
| --external-database-read-username | string | - | Read-only username for the external database. |
| --external-database-read-password | string | - | Read-only password for the external database. |
| --storage-type | string | minio_only | Stream file storage backend for the mirror node importer. |
| --storage-read-access-key | string | - | Storage read access key for the mirror node importer. |
| --storage-read-secrets | string | - | Storage read secret key for the mirror node importer. |
| --storage-endpoint | string | - | Storage endpoint URL for the mirror node importer. |
| --storage-bucket | string | - | Storage bucket name for the mirror node importer. |
| --storage-bucket-prefix | string | - | Storage bucket path prefix. |
| --storage-bucket-region | string | - | Storage bucket region. |
| --operator-id | string | - | Operator account ID for the mirror node. |
| --operator-key | string | - | Operator private key for the mirror node. |
| --quiet-mode | boolean | false | Suppress confirmation prompts. |
| --values-file | string | - | Comma-separated Helm chart values file paths for the mirror node chart. |

Explorer Add — explorerNode

Flags passed to solo explorer node add.

| Flag | Type | Default | Description |
|---|---|---|---|
| --explorer-version | string | current version | Hiero Explorer Helm chart version to deploy. |
| --enable-ingress | boolean | false | Deploy an ingress controller for the explorer. |
| --force-port-forward | boolean | true | Enable port forwarding for the explorer service. |
| --domain-name | string | - | Custom domain name for the explorer. |
| --ingress-controller-value-file | string | - | Path to a Helm values file for the ingress controller. |
| --explorer-chart-dir | string | - | Path to a local Hiero Explorer Helm chart directory. |
| --explorer-static-ip | string | - | Static IP address for the explorer load balancer. |
| --enable-explorer-tls | boolean | false | Enable TLS for the explorer. Requires cert-manager. |
| --explorer-tls-host-name | string | explorer.solo.local | Hostname used for the explorer TLS certificate. |
| --tls-cluster-issuer-type | string | self-signed | TLS cluster issuer type. Options: self-signed, acme-staging, acme-prod. |
| --mirror-node-id | number | - | ID of the mirror node instance to connect the explorer to. |
| --mirror-namespace | string | - | Kubernetes namespace of the mirror node. |
| --solo-chart-version | string | current version | Solo chart version used for explorer cluster setup. |
| --quiet-mode | boolean | false | Suppress confirmation prompts. |
| --values-file | string | - | Comma-separated Helm chart values file paths for the explorer chart. |

JSON-RPC Relay Add — relayNode

Flags passed to solo relay node add.

| Flag | Type | Default | Description |
|---|---|---|---|
| --relay-release | string | current version | Hiero JSON-RPC Relay Helm chart release to deploy. |
| --node-aliases | string | - | Comma-separated node aliases the relay will observe (e.g. node1 or node1,node2). |
| --replica-count | number | 1 | Number of relay replicas to deploy. |
| --chain-id | string | 298 | EVM chain ID exposed by the relay (298 is the Hedera local network chain ID). |
| --force-port-forward | boolean | true | Enable port forwarding for the relay service. |
| --domain-name | string | - | Custom domain name for the relay. |
| --relay-chart-dir | string | - | Path to a local Hiero JSON-RPC Relay Helm chart directory. |
| --operator-id | string | - | Operator account ID for relay transaction signing. |
| --operator-key | string | - | Operator private key for relay transaction signing. |
| --mirror-node-id | number | - | ID of the mirror node instance the relay will query. |
| --mirror-namespace | string | - | Kubernetes namespace of the mirror node. |
| --quiet-mode | boolean | false | Suppress confirmation prompts. |
| --values-file | string | - | Comma-separated Helm chart values file paths for the relay chart. |

Block Node Add — blockNode

Flags passed to solo block node add.

Important: The blockNode section is only read when ONE_SHOT_WITH_BLOCK_NODE=true is set in the environment. Otherwise Solo skips the block node add step regardless of whether a blockNode section is present. Version requirements: consensus node ≥ v0.72.0 and block node ≥ 0.29.0. Use --force to bypass version gating during testing.

| Flag | Type | Default | Description |
|---|---|---|---|
| --release-tag | string | current version | Hiero block node release tag. |
| --image-tag | string | - | Docker image tag to override the Helm chart default. |
| --enable-ingress | boolean | false | Deploy an ingress controller for the block node. |
| --domain-name | string | - | Custom domain name for the block node. |
| --dev | boolean | false | Enable developer mode for the block node. |
| --block-node-chart-dir | string | - | Path to a local Hiero block node Helm chart directory. |
| --quiet-mode | boolean | false | Suppress confirmation prompts. |
| --values-file | string | - | Comma-separated Helm chart values file paths for the block node chart. |

Top-Level Falcon Command Flags

The following flags are passed directly on the solo one-shot falcon deploy command line. They are not read from the values file sections.

| Flag | Type | Default | Description |
|---|---|---|---|
| --values-file | string | - | Path to the Falcon values YAML file. |
| --deployment | string | one-shot | Deployment name for Solo’s internal state. |
| --namespace | string | one-shot | Kubernetes namespace to deploy into. |
| --cluster-ref | string | one-shot | Cluster reference name. |
| --num-consensus-nodes | number | 1 | Number of consensus nodes to deploy. |
| --deploy-mirror-node | boolean | true | Deploy or skip the mirror node. |
| --deploy-explorer | boolean | true | Deploy or skip the explorer. |
| --deploy-relay | boolean | true | Deploy or skip the JSON-RPC relay. |
| --no-rollback | boolean | false | Disable automatic cleanup on deployment failure. Preserves partial resources for inspection. |
| --quiet-mode | boolean | false | Suppress all interactive prompts. |
| --force | boolean | false | Force actions that would otherwise be skipped. |

2.2.3 - Step-by-Step Manual Deployment

Overview

Manual deployment lets you deploy each Solo network component individually, giving you full control over configuration, sequencing, and troubleshooting. Use this approach when you need to customise specific steps, debug a component in isolation, or integrate Solo into a bespoke automation pipeline.

Prerequisites

Before proceeding, ensure you have completed the following:

System Readiness - your local environment meets all hardware and software requirements (Docker, kind, kubectl, helm, Solo).

Quickstart - you have a running Kind cluster and have run solo init at least once.

Set your environment variables if you have not already done so:

export SOLO_CLUSTER_NAME=solo
export SOLO_NAMESPACE=solo
export SOLO_CLUSTER_SETUP_NAMESPACE=solo-cluster
export SOLO_DEPLOYMENT=solo-deployment

Deployment Steps

1. Connect Cluster and Create Deployment

Connect Solo to the Kind cluster and create a new deployment configuration:

# Connect to the Kind cluster
solo cluster-ref config connect \
  --cluster-ref kind-${SOLO_CLUSTER_NAME} \
  --context kind-${SOLO_CLUSTER_NAME}

# Create a new deployment
solo deployment config create \
  -n "${SOLO_NAMESPACE}" \
  --deployment "${SOLO_DEPLOYMENT}"

Expected Output:

******************************* Solo *********************************************
Version : 0.63.0
Kubernetes Context : kind-solo
Kubernetes Cluster : kind-solo
Current Command : cluster-ref config connect --cluster-ref kind-solo --context kind-solo
**********************************************************************************
Initialize
✔ Initialize
Validating cluster ref:
✔ Validating cluster ref: kind-solo
Test connection to cluster:
✔ Test connection to cluster: kind-solo
Associate a context with a cluster reference:
✔ Associate a context with a cluster reference: kind-solo

2. Add Cluster to Deployment

Attach the cluster to your deployment and specify the number of consensus nodes:

1. Single node:

solo deployment cluster attach \
  --deployment "${SOLO_DEPLOYMENT}" \
  --cluster-ref kind-${SOLO_CLUSTER_NAME} \
  --num-consensus-nodes 1

2. Multiple nodes (for example, --num-consensus-nodes 3):

solo deployment cluster attach \
  --deployment "${SOLO_DEPLOYMENT}" \
  --cluster-ref kind-${SOLO_CLUSTER_NAME} \
  --num-consensus-nodes 3

3. Generate Keys

Generate the gossip and TLS keys for your consensus nodes:

solo keys consensus generate \
  --gossip-keys \
  --tls-keys \
  --deployment "${SOLO_DEPLOYMENT}"

PEM key files are written to ~/.solo/cache/keys/.

Example output:

******************************* Solo *********************************************
Version : 0.63.0
Kubernetes Context : kind-solo
Kubernetes Cluster : kind-solo
Current Command : keys consensus generate --gossip-keys --tls-keys --deployment solo-deployment
**********************************************************************************
Initialize
✔ Initialize
Generate gossip keys
Backup old files
✔ Backup old files
Gossip key for node: node1
✔ Gossip key for node: node1 [0.2s]
✔ Generate gossip keys [0.2s]
Generate gRPC TLS Keys
Backup old files
TLS key for node: node1
✔ Backup old files
✔ TLS key for node: node1 [0.3s]
✔ Generate gRPC TLS Keys [0.3s]
Finalize
✔ Finalize
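After key generation you may want to confirm that PEM files actually landed in the key directory. The sketch below counts them; `count_pem_files` is a hypothetical helper, and it makes no assumption about how many files Solo writes per node.

```shell
# Count the .pem files directly inside a key directory.
# Defaults to the location named in this guide.
count_pem_files() {
  local dir="${1:-$HOME/.solo/cache/keys}"
  find "$dir" -maxdepth 1 -type f -name '*.pem' 2>/dev/null | wc -l | tr -d ' '
}

echo "PEM files found: $(count_pem_files)"
```

A count of 0 after a successful run suggests the keys were written somewhere other than the default directory.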

4. Set Up Cluster with Shared Components

Install shared cluster-level components (MinIO Operator, Prometheus CRDs, etc.) into the cluster setup namespace:

solo cluster-ref config setup --cluster-setup-namespace "${SOLO_CLUSTER_SETUP_NAMESPACE}"

Example output:

******************************* Solo *********************************************
Version : 0.63.0
Kubernetes Context : kind-solo
Kubernetes Cluster : kind-solo
Current Command : cluster-ref config setup --cluster-setup-namespace solo-cluster
**********************************************************************************
Check dependencies
Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
✔ Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
✔ Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
✔ Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
✔ Check dependencies
Setup chart manager
✔ Setup chart manager [0.6s]
Initialize
✔ Initialize
Install cluster charts
Install pod-monitor-role ClusterRole
- ClusterRole pod-monitor-role already exists in context kind-solo, skipping
✔ Install pod-monitor-role ClusterRole
Install MinIO Operator chart
✔ MinIO Operator chart installed successfully on context kind-solo
✔ Install MinIO Operator chart [0.8s]
✔ Install cluster charts [0.8s]

5. Deploy the Network

Deploy the Solo network Helm chart, which provisions the consensus node pods, HAProxy, Envoy, and MinIO:

solo consensus network deploy --deployment "${SOLO_DEPLOYMENT}"

Example output:

******************************* Solo *********************************************
Version : 0.63.0
Kubernetes Context : kind-solo
Kubernetes Cluster : kind-solo
Current Command : consensus network deploy --deployment solo-deployment --release-tag v0.66.0
**********************************************************************************
Check dependencies
Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
✔ Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
✔ Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
✔ Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64]
✔ Check dependencies
Setup chart manager
✔ Setup chart manager [0.7s]
Initialize
Acquire lock
✔ Acquire lock - lock acquired successfully, attempt: 1/10
✔ Initialize [0.2s]
Copy gRPC TLS Certificates
Copy gRPC TLS Certificates [SKIPPED: Copy gRPC TLS Certificates]
Prepare staging directory
Copy Gossip keys to staging
✔ Copy Gossip keys to staging
Copy gRPC TLS keys to staging
✔ Copy gRPC TLS keys to staging
✔ Prepare staging directory
Copy node keys to secrets
Copy TLS keys
Node: node1, cluster: kind-solo
Copy Gossip keys
✔ Copy TLS keys
✔ Copy Gossip keys
✔ Node: node1, cluster: kind-solo
✔ Copy node keys to secrets
Install monitoring CRDs
Pod Logs CRDs
✔ Pod Logs CRDs
Prometheus Operator CRDs
- Installed prometheus-operator-crds chart, version: 24.0.2
✔ Prometheus Operator CRDs [4s]
✔ Install monitoring CRDs [4s]
Install chart 'solo-deployment'
- Installed solo-deployment chart, version: 0.62.0
✔ Install chart 'solo-deployment' [2s]
Check for load balancer
Check for load balancer [SKIPPED: Check for load balancer]
Redeploy chart with external IP address config
Redeploy chart with external IP address config [SKIPPED: Redeploy chart with external IP address config]
Check node pods are running
Check Node: node1, Cluster: kind-solo
✔ Check Node: node1, Cluster: kind-solo [24s]
✔ Check node pods are running [24s]
Check proxy pods are running
Check HAProxy for: node1, cluster: kind-solo
Check Envoy Proxy for: node1, cluster: kind-solo
✔ Check HAProxy for: node1, cluster: kind-solo
✔ Check Envoy Proxy for: node1, cluster: kind-solo
✔ Check proxy pods are running
Check auxiliary pods are ready
Check MinIO
✔ Check MinIO
✔ Check auxiliary pods are ready
Add node and proxies to remote config
✔ Add node and proxies to remote config
Copy wraps lib into consensus node
Copy wraps lib into consensus node [SKIPPED: Copy wraps lib into consensus node]
Copy block-nodes.json
✔ Copy block-nodes.json [1s]
Copy JFR config file to nodes
Copy JFR config file to nodes [SKIPPED: Copy JFR config file to nodes]

6. Set Up Consensus Nodes

Download the consensus node platform software and configure each node:

export CONSENSUS_NODE_VERSION=v0.66.0

solo consensus node setup \
  --deployment "${SOLO_DEPLOYMENT}" \
  --release-tag "${CONSENSUS_NODE_VERSION}"

Example output:

******************************* Solo *********************************************
Version : 0.63.0
Kubernetes Context : kind-solo
Kubernetes Cluster : kind-solo
Current Command : consensus node setup --deployment solo-deployment --release-tag v0.66.0
**********************************************************************************
Load configuration
✔ Load configuration [0.2s]
Initialize
✔ Initialize [0.2s]
Validate nodes states
Validating state for node node1
✔ Validating state for node node1 - valid state: requested
✔ Validate nodes states
Identify network pods
Check network pod: node1
✔ Check network pod: node1
✔ Identify network pods
Fetch platform software into network nodes
Update node: node1 [ platformVersion = v0.66.0, context = kind-solo ]
✔ Update node: node1 [ platformVersion = v0.66.0, context = kind-solo ] [3s]
✔ Fetch platform software into network nodes [3s]
Setup network nodes
Node: node1
Copy configuration files
✔ Copy configuration files [0.3s]
Set file permissions
✔ Set file permissions [0.4s]
✔ Node: node1 [0.8s]
✔ Setup network nodes [0.9s]
setup network node folders
✔ setup network node folders [0.1s]
Change node state to configured in remote config
✔ Change node state to configured in remote config

7. Start Consensus Nodes

Start all configured nodes and wait for them to reach ACTIVE status:

solo consensus node start --deployment "${SOLO_DEPLOYMENT}"

Example output:

******************************* Solo ********************************************* Version : 0.63.0 Kubernetes Context : kind-solo Kubernetes Cluster : kind-solo Current Command : consensus node start --deployment solo-deployment ********************************************************************************** Check dependencies Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64] Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64] Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependencies Setup chart manager ✔ Setup chart manager [0.7s] Load configuration ✔ Load configuration [0.2s] Initialize ✔ Initialize [0.2s] Validate nodes states Validating state for node node1 ✔ Validating state for node node1 - valid state: configured ✔ Validate nodes states Identify existing network nodes Check network pod: node1 ✔ Check network pod: node1 ✔ Identify existing network nodes Upload state files network nodes Upload state files network nodes [SKIPPED: Upload state files network nodes] Starting nodes Start node: node1 ✔ Start node: node1 [0.1s] ✔ Starting nodes [0.1s] Enable port forwarding for debug port and/or GRPC port Using requested port 50211 ✔ Enable port forwarding for debug port and/or GRPC port Check all nodes are ACTIVE Check network pod: node1 ✔ Check network pod: node1 - status ACTIVE, attempt: 16/300 [20s] ✔ Check all nodes are ACTIVE [20s] Check node proxies are ACTIVE Check proxy for node: node1 ✔ Check proxy for node: node1 [6s] ✔ Check node proxies are ACTIVE [6s] Wait for TSS Wait for TSS [SKIPPED: Wait for TSS] set gRPC Web endpoint Using requested port 30212 ✔ set gRPC Web endpoint [3s] Change node state to started in remote config ✔ Change node state to started in remote config Add node stakes Adding stake for node: node1 ✔ Adding stake for node: node1 [4s] ✔ Add node stakes [4s] Stopping port-forward for port [30212]
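The output above shows Solo polling until the node reports ACTIVE (attempt 16/300). If you script follow-up steps of your own, a similar retry loop can wait for a condition before continuing. This is a minimal sketch; retry is a hypothetical helper, not a Solo command.

```shell
# retry MAX DELAY CMD...: run CMD until it succeeds or MAX attempts are used.
# Hypothetical helper for your own scripts; Solo does its own polling.
retry() {
  _retry_max="$1"
  _retry_delay="$2"
  shift 2
  _retry_attempt=1
  while :; do
    if "$@"; then
      echo "succeeded on attempt ${_retry_attempt}/${_retry_max}"
      return 0
    fi
    if [ "${_retry_attempt}" -ge "${_retry_max}" ]; then
      break
    fi
    _retry_attempt=$((_retry_attempt + 1))
    sleep "${_retry_delay}"
  done
  echo "gave up after ${_retry_max} attempts" >&2
  return 1
}

# Demonstration with a command that always succeeds.
retry 3 0 true
```

For example, assuming netcat is installed, retry 30 2 nc -z localhost 50211 would wait for the forwarded gRPC port shown in the output to accept connections.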

8. Deploy Mirror Node

Deploy the Hedera Mirror Node, which indexes all transaction data and exposes a REST API and gRPC endpoint:

solo mirror node add \
  --deployment "${SOLO_DEPLOYMENT}" \
  --cluster-ref kind-${SOLO_CLUSTER_NAME} \
  --enable-ingress \
  --pinger

The --pinger flag keeps the mirror node’s importer active by regularly submitting record files. The --enable-ingress flag installs the HAProxy ingress controller for the mirror node REST API.

Example output:

******************************* Solo ********************************************* Version : 0.63.0 Kubernetes Context : kind-solo Kubernetes Cluster : kind-solo Current Command : mirror node add --deployment solo-deployment --cluster-ref kind-solo --enable-ingress --quiet-mode ********************************************************************************** Check dependencies Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64] Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64] Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependencies Setup chart manager ✔ Setup chart manager [0.6s] Initialize Using requested port 30212 Acquire lock ✔ Acquire lock - lock acquired successfully, attempt: 1/10 [0.1s] ✔ Initialize [1s] Enable mirror-node Prepare address book ✔ Prepare address book Install mirror ingress controller - Installed haproxy-ingress-1 chart, version: 0.14.5 ✔ Install mirror ingress controller [0.7s] Deploy mirror-node - Installed mirror chart, version: v0.149.0 ✔ Deploy mirror-node [3s] ✔ Enable mirror-node [4s] Check pods are ready Check Postgres DB Check REST API Check GRPC Check Monitor Check Web3 Check Importer ✔ Check Postgres DB [32s] ✔ Check Web3 [46s] ✔ Check REST API [52s] ✔ Check GRPC [58s] ✔ Check Monitor [1m16s] ✔ Check Importer [1m32s] ✔ Check pods are ready [1m32s] Seed DB data Insert data in public.file_data ✔ Insert data in public.file_data [0.6s] ✔ Seed DB data [0.6s] Add mirror node to remote config ✔ Add mirror node to remote config Enable port forwarding for mirror ingress controller Using requested port 8081 ✔ Enable port forwarding for mirror ingress controller Stopping port-forward for port [30212]
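Once the deployment finishes, you can query the mirror node REST API through the forwarded ingress port (8081 in the output above). The sketch below only builds the URL; MIRROR_REST_PORT, mirror_url, and mirror_get are hypothetical names, and /api/v1/transactions is the standard mirror node REST path.

```shell
# Port taken from the "Using requested port 8081" line in the example output.
# MIRROR_REST_PORT is a hypothetical variable used only by this sketch.
MIRROR_REST_PORT="${MIRROR_REST_PORT:-8081}"

# Build a mirror node REST URL for a given resource path.
mirror_url() {
  printf 'http://localhost:%s/api/v1/%s\n' "${MIRROR_REST_PORT}" "$1"
}

# Fetch a resource with curl; call this only when the network is running.
mirror_get() {
  curl -fsS "$(mirror_url "$1")"
}

# Print the URL that would be requested for the latest transaction.
mirror_url 'transactions?limit=1'
```

With the network running, mirror_get 'transactions?limit=1' should return a JSON document if the port forward is active.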

9. Deploy Explorer

Deploy the Hiero Explorer, a web UI for browsing transactions and accounts:

solo explorer node add \
  --deployment "${SOLO_DEPLOYMENT}" \
  --cluster-ref kind-${SOLO_CLUSTER_NAME}

Example output:

******************************* Solo ********************************************* Version : 0.63.0 Kubernetes Context : kind-solo Kubernetes Cluster : kind-solo Current Command : explorer node add --deployment solo-deployment --cluster-ref kind-solo --quiet-mode ********************************************************************************** Check dependencies Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64] Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64] Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependencies Setup chart manager ✔ Setup chart manager [0.7s] Initialize Acquire lock ✔ Acquire lock - lock acquired successfully, attempt: 1/10 ✔ Initialize [0.5s] Load remote config ✔ Load remote config [0.2s] Install cert manager Install cert manager [SKIPPED: Install cert manager] Install explorer - Installed hiero-explorer-1 chart, version: 26.0.0 ✔ Install explorer [0.8s] Install explorer ingress controller Install explorer ingress controller [SKIPPED: Install explorer ingress controller] Check explorer pod is ready ✔ Check explorer pod is ready [18s] Check haproxy ingress controller pod is ready Check haproxy ingress controller pod is ready [SKIPPED: Check haproxy ingress controller pod is ready] Add explorer to remote config ✔ Add explorer to remote config Enable port forwarding for explorer No port forward config found for Explorer Using requested port 8080 ✔ Enable port forwarding for explorer [0.1s]

10. Deploy JSON-RPC Relay

Deploy the Hiero JSON-RPC Relay to expose an Ethereum-compatible JSON-RPC endpoint for EVM tooling (MetaMask, Hardhat, Foundry, etc.):

solo relay node add \
  -i node1 \
  --deployment "${SOLO_DEPLOYMENT}"

Example output:

******************************* Solo ********************************************* Version : 0.63.0 Kubernetes Context : kind-solo Kubernetes Cluster : kind-solo Current Command : relay node add --node-aliases node1 --deployment solo-deployment --cluster-ref kind-solo ********************************************************************************** Check dependencies Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64] Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64] Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: kind [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: helm [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependency: kubectl [OS: linux, Release: 6.8.0-106-generic, Arch: x64] ✔ Check dependencies Setup chart manager ✔ Setup chart manager [0.7s] Initialize Acquire lock ✔ Acquire lock - lock acquired successfully, attempt: 1/10 ✔ Initialize [0.4s] Check chart is installed ✔ Check chart is installed [0.1s] Prepare chart values Using requested port 30212 ✔ Prepare chart values [1s] Deploy JSON RPC Relay - Installed relay-1 chart, version: 0.73.0 ✔ Deploy JSON RPC Relay [0.7s] Check relay is running ✔ Check relay is running [16s] Check relay is ready ✔ Check relay is ready [21s] Add relay component in remote config ✔ Add relay component in remote config Enable port forwarding for relay node Using requested port 7546 ✔ Enable port forwarding for relay node [0.1s] Stopping port-forward for port [30212]
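To confirm the relay answers JSON-RPC requests, you can send a standard eth_chainId call to the forwarded port (7546 in the output above). This is a minimal sketch; RELAY_URL, jsonrpc_payload, and relay_call are hypothetical names introduced for illustration.

```shell
# Port taken from the "Using requested port 7546" line in the example output.
# RELAY_URL is a hypothetical variable used only by this sketch.
RELAY_URL="${RELAY_URL:-http://localhost:7546}"

# Build a JSON-RPC 2.0 request body for a method that takes no parameters.
jsonrpc_payload() {
  printf '{"jsonrpc":"2.0","id":1,"method":"%s","params":[]}\n' "$1"
}

# POST the request with curl; call this only when the relay is running.
relay_call() {
  curl -fsS -X POST \
    -H 'Content-Type: application/json' \
    --data "$(jsonrpc_payload "$1")" \
    "${RELAY_URL}"
}

# Print the request body that would be sent.
jsonrpc_payload eth_chainId
```

With the relay running, relay_call eth_chainId should return a JSON response whose result field holds the chain ID in hexadecimal.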

Cleanup

When you are done, destroy components in the reverse order of deployment.

Important: Always destroy components before destroying the network. Skipping this order can leave orphaned Helm releases and PVCs in your cluster.

1. Destroy JSON-RPC Relay

solo relay node destroy \
  -i node1 \
  --deployment "${SOLO_DEPLOYMENT}" \
  --cluster-ref kind-${SOLO_CLUSTER_NAME}

2. Destroy Mirror Node

solo mirror node destroy \
  --deployment "${SOLO_DEPLOYMENT}" \
  --force

3. Destroy Explorer

solo explorer node destroy \
  --deployment "${SOLO_DEPLOYMENT}" \
  --force

4. Destroy the Network

solo consensus network destroy \
  --deployment "${SOLO_DEPLOYMENT}" \
  --force
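The four cleanup steps can be wrapped in one script so they always run in the documented order and stop at the first failure. This is a sketch under stated assumptions: run, cleanup_all, and DRY_RUN are hypothetical names, and the script only prints the commands unless DRY_RUN is set to 0.

```shell
# DRY_RUN=1 (default) prints each command instead of executing it.
DRY_RUN="${DRY_RUN:-1}"
SOLO_DEPLOYMENT="${SOLO_DEPLOYMENT:-solo-deployment}"
SOLO_CLUSTER_NAME="${SOLO_CLUSTER_NAME:-solo}"

# Print the command in dry-run mode, otherwise execute it.
run() {
  if [ "${DRY_RUN}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Destroy components first, then the network; "&&" stops at the first failure.
cleanup_all() {
  run solo relay node destroy -i node1 \
    --deployment "${SOLO_DEPLOYMENT}" \
    --cluster-ref "kind-${SOLO_CLUSTER_NAME}" &&
  run solo mirror node destroy --deployment "${SOLO_DEPLOYMENT}" --force &&
  run solo explorer node destroy --deployment "${SOLO_DEPLOYMENT}" --force &&
  run solo consensus network destroy --deployment "${SOLO_DEPLOYMENT}" --force
}

cleanup_all
```

Review the printed commands first, then rerun with DRY_RUN=0 to perform the actual teardown.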

2.2.4 - Dynamically add, update, and remove Consensus Nodes

Overview

This guide covers how to dynamically manage consensus nodes in a running Solo network - adding new nodes, updating existing ones, and removing nodes that are no longer needed. All three operations can be performed without taking the network offline.

Prerequisites

Before proceeding, ensure you have:

A running Solo network. If you don’t have one, deploy using one of the following methods:

- Quickstart - single command deployment using solo one-shot single deploy.
- Manual Deployment - step-by-step deployment with full control over each component.

Set the required environment variables as described below:

export SOLO_CLUSTER_NAME=solo
export SOLO_NAMESPACE=solo
export SOLO_CLUSTER_SETUP_NAMESPACE=solo-cluster
export SOLO_DEPLOYMENT=solo-deployment
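Because later commands expand these variables directly, an unset variable can produce a confusing error. The sketch below exports the values listed above and verifies that none are empty; require_env is a hypothetical helper, not a Solo command.

```shell
# Values from this guide.
export SOLO_CLUSTER_NAME=solo
export SOLO_NAMESPACE=solo
export SOLO_CLUSTER_SETUP_NAMESPACE=solo-cluster
export SOLO_DEPLOYMENT=solo-deployment

# require_env NAME...: report every listed variable that is unset or empty.
# Hypothetical helper; names passed in must be trusted, since eval is used.
require_env() {
  _missing=0
  for _name in "$@"; do
    eval "_value=\${${_name}:-}"
    if [ -z "${_value}" ]; then
      echo "missing environment variable: ${_name}" >&2
      _missing=1
    fi
  done
  return "${_missing}"
}

if require_env SOLO_CLUSTER_NAME SOLO_NAMESPACE \
  SOLO_CLUSTER_SETUP_NAMESPACE SOLO_DEPLOYMENT; then
  # The cluster reference used elsewhere in this guide is kind-<cluster name>.
  echo "environment ready, cluster ref: kind-${SOLO_CLUSTER_NAME}"
fi
```

With the values above, the derived cluster reference is kind-solo, which matches the Kubernetes context shown in the example outputs.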

Key and Storage Concepts

Before running any node operation, it helps to understand two concepts that appear in the prepare step.

Cryptographic Keys

Solo generates two types of keys for each consensus node:

- Gossip keys - used for encrypted node-to-node communication within the network. Stored as s-private-node*.pem and s-public-node*.pem under ~/.solo/cache/keys/.
- TLS keys - used to secure gRPC connections to the node. Stored as hedera-node*.crt and hedera-node*.key under ~/.solo/cache/keys/.

When adding a new node, Solo generates a fresh key pair and stores it alongside the keys for existing nodes in the same directory. For more detail, see Where are my keys stored?.
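To see which key files exist before and after adding a node, you can list the files that match the patterns described above. This is a minimal sketch; list_node_keys and KEYS_DIR are hypothetical names, and the default path is the ~/.solo/cache/keys/ directory named in this section.

```shell
# Default key directory from this guide; KEYS_DIR is a hypothetical override.
KEYS_DIR="${KEYS_DIR:-${HOME:-.}/.solo/cache/keys}"

# list_node_keys DIR: print the names of gossip and TLS key files in DIR.
list_node_keys() {
  _dir="$1"
  for _pattern in 's-private-node*.pem' 's-public-node*.pem' \
    'hedera-node*.crt' 'hedera-node*.key'; do
    # The pattern is left unquoted on purpose so the shell expands the glob.
    for _file in "${_dir}"/${_pattern}; do
      if [ -e "${_file}" ]; then
        echo "${_file##*/}"
      fi
    done
  done
  return 0
}

# Prints nothing if the directory does not exist or holds no keys yet.
list_node_keys "${KEYS_DIR}"
```

Running the function before and after a node add operation and comparing the two listings shows which files were generated for the new node.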

Persistent Volume Claims (PVCs)

By default, consensus node storage is ephemeral - data stored by a node is lost if its pod crashes or is restarted. This is intentional for lightweight local testing where persistence is not required.

The --pvcs true flag creates Persistent Volume Claims (PVCs) for the node, ensuring its state survives pod restarts. Enable this flag for any node that needs to persist across restarts or that will participate in longer-running test scenarios.

Note: PVCs are not enabled by default. Enable them only if your node needs to persist state across pod restarts.
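To check whether PVCs were actually created, you can list them with kubectl. The sketch below assumes the claims live in the namespace held by SOLO_NAMESPACE, which this guide sets to solo; show_pvcs is a hypothetical helper, not a Solo command.

```shell
# show_pvcs: list Persistent Volume Claims in the Solo namespace.
# Assumes the claims are created in ${SOLO_NAMESPACE} (default: solo).
show_pvcs() {
  if ! command -v kubectl >/dev/null 2>&1; then
    echo "kubectl not found on PATH" >&2
    return 1
  fi
  kubectl get pvc --namespace "${SOLO_NAMESPACE:-solo}"
}
```

Call show_pvcs after the node is deployed. An empty list suggests the node is using ephemeral storage.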

Staging Directory

The